From Bare Metal to Hypervisor

One physical server. Three drives in RAID 5. Proxmox VE live and reachable on the school LAN — in a single week.

HP ML350p Gen8 · 3 drives in RAID 5 · 3 TB total storage · 32 GB DDR3 ECC RAM

Instructor: Anthony Pena

What Week 1 required us to do

🎯 Project objective

Take an enterprise tower server straight off the shelf, identify and document every component, build a fault-tolerant storage array, and install a hypervisor that's reachable from the school LAN.

Deliverables

- Full hardware inventory with part numbers and datasheets

- RAID array sized for the four planned VMs

- Live Proxmox VE host on a static management IP

- Screenshots proving each milestone

📋 The build sequence

- Part 1 · Flash a USB drive with the Proxmox ISO using Rufus

- Part 2 · Enter BIOS, set USB boot priority

- Part 3 · Build the RAID array in the controller utility

- Part 4 · Install Proxmox VE on the array · verify the dashboard

- Part 5 · Upload ISO images to Proxmox storage

Constraint: every IP we used had to come from the instructor, because the school LAN 10.10.0.0/16 is shared with other teams.

HP ProLiant ML350p Gen8 tower server

Asset details

| Manufacturer | HP |

| Model | ProLiant ML350p Gen8 |

| Product ID | 736983-S01 |

| Serial # | 2M251705M9 |

| Form factor | 5U Tower (rack convertible) |

| BIOS | P72 · 08/02/2014 |

| Chassis | Hot-plug drives, redundant PSU, hot-plug fans |

| Management | iLO 4 (dedicated RJ-45 port) |

Why this platform fits

- Enterprise-grade hardware — hot-plug drives, ECC RAM, redundant PSUs — built for 24/7 lab use

- iLO 4 gives us out-of-band management over its own network port, so routine operation doesn't require standing at the machine

- Smart Array P420i gives us hardware RAID: parity is computed on the controller instead of the host CPU, and the array definition lives on the controller and disks, so it survives a host reinstall

- Four onboard 1 GbE NICs give us room to segment traffic in later weeks

- Two CPU sockets and 24 DIMM slots = headroom for future expansion

What's inside

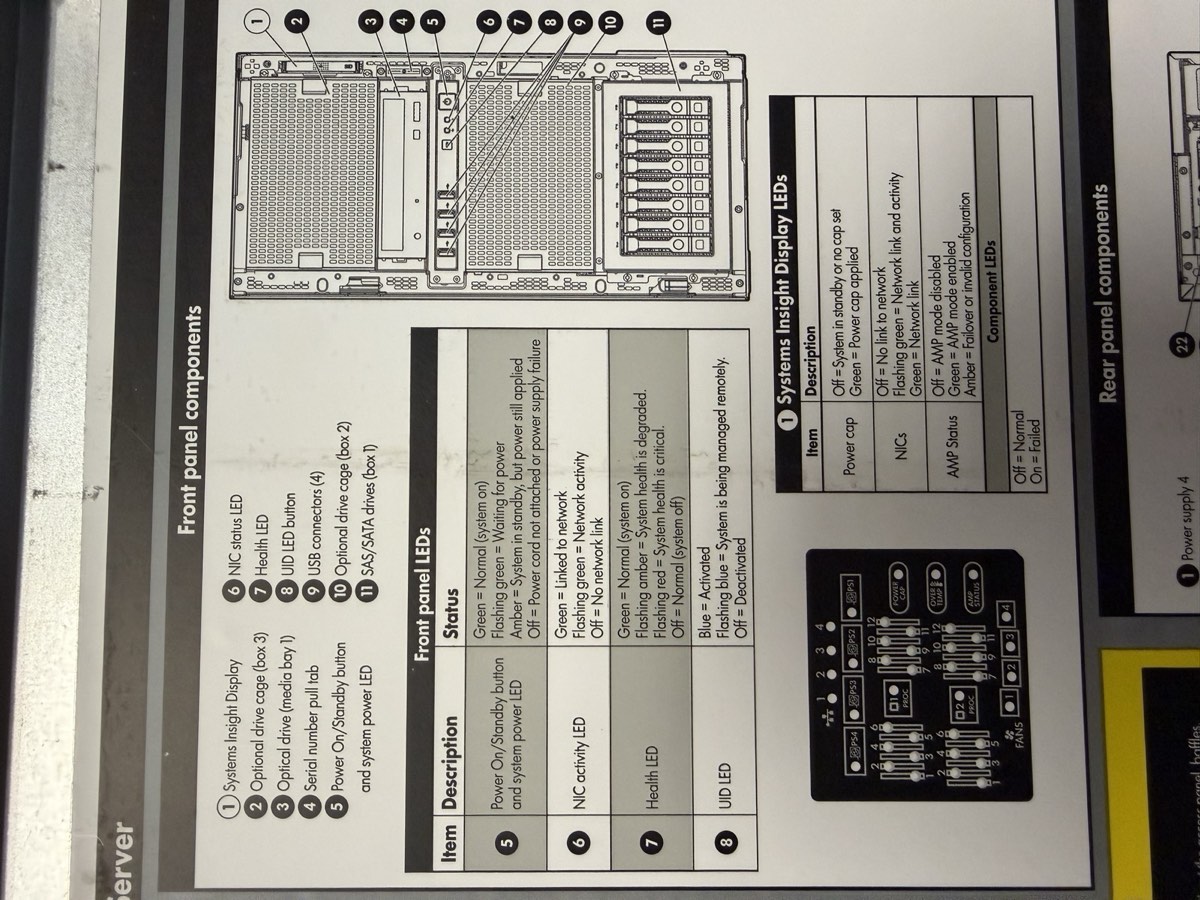

Front panel — Systems Insight Display, drive cage, USB ports, serial pull-tab, power button

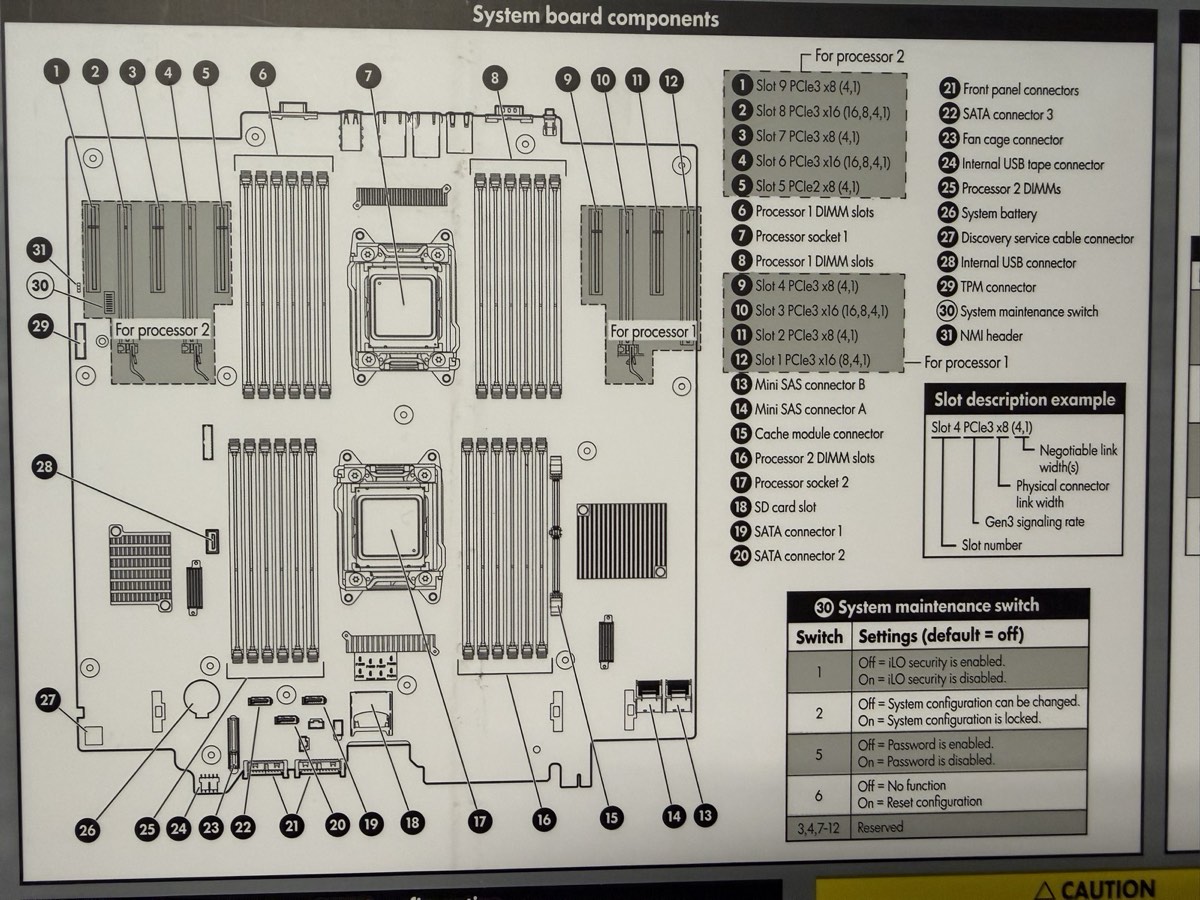

System board — 2 CPU sockets, 24 DIMM slots, P420i RAID controller, PCIe risers

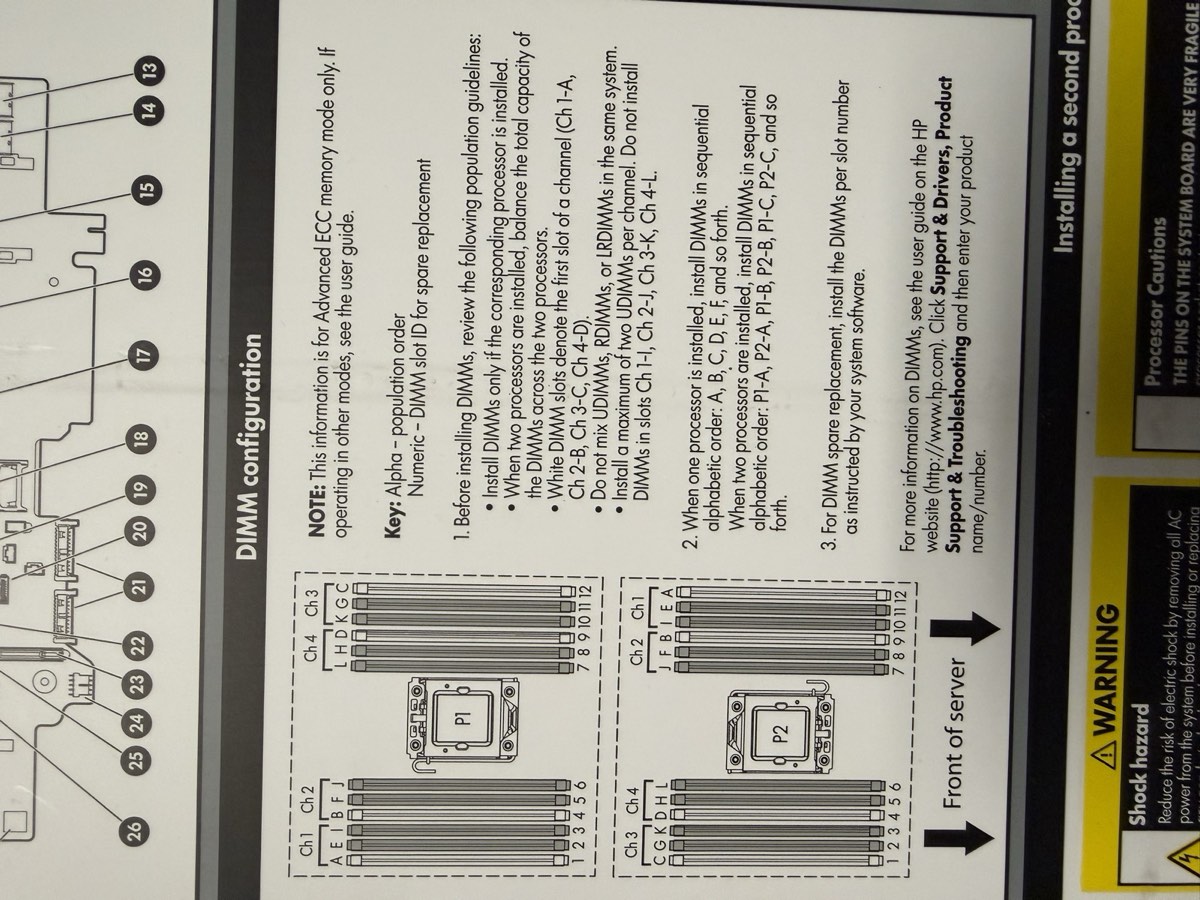

DIMM population chart — white slots first, matched pairs, balanced across channels

Every part matched to a datasheet

RAM · 4× 8 GB

SK Hynix HMT41GR7BFR4A-PB · PC3L-12800R · DDR3L-1600 ECC RDIMM · 1.35 V

Drive 1 · WD Blue

WD10EZEX · 1 TB · 7200 RPM · 64 MB cache · SATA · 2018

Drive 2 · WD RE3

WD1002FBYS · 1 TB · 7200 RPM · 32 MB cache · SATA · 2010

PSU · 2× 460 W 1+1

HP HSTNS-PL14 · Gold Common Slot · p/n 511777-001

Additional: 1× Xeon E5-2609 v2 (socket 1) · 3× 1 TB SATA drives in Bays 1–3 (3 TB total) · P420i RAID controller onboard · iLO 4 management

The machine, fully documented

Compute

| CPU | 1× Xeon E5-2609 v2 |

| Cores / threads | 4C / 4T (no HT) |

| Base clock | 2.50 GHz (no turbo) |

| L3 cache | 10 MB |

| Socket 2 | empty |

| VT-x / VT-d | ✓ |
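To double-check the VT-x row from the Proxmox host shell, here is a minimal sketch. It counts the logical CPUs whose `flags` line in `/proc/cpuinfo` advertises `vmx` (Intel VT-x) or `svm` (AMD-V). The function name `virt_capable_cpus` and its optional file argument are our own, added so it can be tried against a sample file. VT-d (the IOMMU) is a separate feature and is not covered by this check.

```bash
#!/usr/bin/env bash
# Count logical CPUs that advertise hardware virtualization.
# vmx = Intel VT-x, svm = AMD-V. A result of 0 means KVM cannot use hardware acceleration.
# Optional argument: path to a cpuinfo-format file (defaults to the live /proc/cpuinfo).
virt_capable_cpus() {
  local cpuinfo=${1:-/proc/cpuinfo}
  # -c counts matching lines (one "flags" line per logical CPU); -w matches whole words only
  grep -cwE 'vmx|svm' "$cpuinfo"
}
```

On this single-socket, 4-thread host, `virt_capable_cpus` should print 4 when VT-x is enabled in RBSU. A 0 usually means the feature is switched off in firmware.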

Memory

| Installed | 32 GB = 4× 8 GB |

| Type | DDR3L-1600 ECC RDIMM |

| Effective | 1333 MT/s (IMC cap) |

| Slots used | 4 of 24 · quad-channel ✓ |

| NUMA nodes | 1 (single CPU) |

Storage

| Controller | Smart Array P420i |

| Firmware | 8.50.66.00 |

| Cache | FBWC capacitor (likely 512 MB) |

| Drives | 3× 1 TB SATA 3.5" (3 TB total) |

| Bay locations | Port 11, Box 1, Bays 1–3 |

Networking

| Onboard NIC | HP 331i (Broadcom BCM5719) |

| Ports | 4× 1 GbE |

| PXE / SR-IOV | ✓ / ✓ |

| Remote mgmt | iLO 4 (dedicated RJ-45) |

Power & Cooling

| PSUs | 2× 460 W 1+1 |

| Model | HP HSTNS-PL14 Gold |

| Input | 100–240 V · 50/60 Hz |

| Fans | 4 hot-plug redundant |

Firmware

| BIOS | P72 · 08/02/2014 |

| Bootblock | 03/05/2013 |

| SATA Option ROM | v2.00.CO2 (2011) |

| NIC Boot Agent | Broadcom NetXtreme (2014) |

| Boot mode | Legacy |

Five steps from bare metal to live hypervisor

| # | Step | Where |
|---|---|---|
| 1 | Flash USB with Proxmox ISO | Rufus on school PC |
| 2 | Set USB as primary boot device | Server BIOS |
| 3 | Build RAID 5 array on 3 drives | P420i ORCA |
| 4 | Install Proxmox VE + verify dashboard | Console + browser :8006 |
| 5 | Upload ISO images to Proxmox | Web UI → local storage |

Tools and assets we needed

- USB drive (4 GB minimum; the DD-mode flash wipes anything already on it)

- Rufus 4.7 from `\\itsdc3\its`

- Proxmox VE 6.4-1 ISO (later upgraded to 8.2.2)

- Keyboard + monitor for console access

- Static IP from the instructor on `10.10.0.0/16`

- Patch cable to a school LAN port

Build the bootable installer

Procedure

1. Plug the USB drive into a school PC.

2. Open File Explorer and type `\\itsdc3\its` in the address bar.

3. Open Rufus 4.7.

4. Under Device, select your USB drive (if it isn't listed, open "Show advanced drive properties" and tick "List USB Hard Drives").

5. Click SELECT, navigate to `\\itsdc3\its`, and pick the Proxmox 6.4-1 ISO.

6. Partition scheme: MBR for Legacy BIOS (this server's boot mode), GPT for UEFI.

7. File system: FAT32. Steps 6 and 7 stop mattering once DD mode is chosen in step 9, because the image is then copied over the whole drive as-is.

8. Click START.

9. When prompted, choose DD mode; Proxmox requires it.

10. Wait for Rufus to finish, then safely eject the drive.

Why DD mode matters

Proxmox's installer image is a hybrid ISO — it expects to be written byte-for-byte to the USB, not extracted as files. ISO mode (the default) leaves the bootloader in a state where the server can't find the installer.

If you forget DD mode, the symptom is a USB that BIOS recognizes as bootable but that drops to a black screen or "no operating system found" right after POST.
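Rufus runs on Windows, but the same idea can be checked from any Linux shell. The sketch below assumes GNU or BSD core utilities. The function names are hypothetical, and the example commands use placeholder names (`proxmox.iso`, `/dev/sdX`). It shows two things. First, a hybrid ISO carries an MBR boot signature (`0x55 0xAA` at byte offset 510) that a plain ISO 9660 image normally lacks. Second, a correct DD-mode write leaves the USB beginning with a byte-for-byte copy of the image.

```bash
#!/usr/bin/env bash
# Hybrid ISOs embed an MBR in their first 512 bytes, ending in the boot signature 55 AA.
# Returns success if the signature is present.
is_hybrid_iso() {
  local sig
  # Read 2 bytes at offset 510 as hex, then strip od's spacing and newline
  sig=$(od -An -tx1 -j510 -N2 "$1" | tr -d ' \n')
  [ "$sig" = "55aa" ]
}

# After a DD-mode write, the target must start with an exact copy of the image.
# Works on a block device (given read permission) or on a regular file.
verify_dd_write() {
  local image=$1 target=$2 size
  size=$(( $(wc -c < "$image") ))   # arithmetic strips any padding wc adds
  head -c "$size" "$target" | cmp -s - "$image"
}

# Example (placeholder names; dd overwrites the target, so check the device first):
#   is_hybrid_iso proxmox.iso && echo "hybrid image: use DD mode"
#   sudo dd if=proxmox.iso of=/dev/sdX bs=4M conv=fsync status=progress
#   verify_dd_write proxmox.iso /dev/sdX && echo "write OK"   # needs read access to /dev/sdX
```

A file-level (ISO mode) copy fails `verify_dd_write` by design: the drive gets a new partition table and filesystem rather than the image's own boot sector.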

Output

A bootable USB containing the Proxmox VE installer. Anything that was previously on the drive is gone.

⚠ Double-check the device dropdown before clicking START — Rufus will happily wipe the wrong drive.

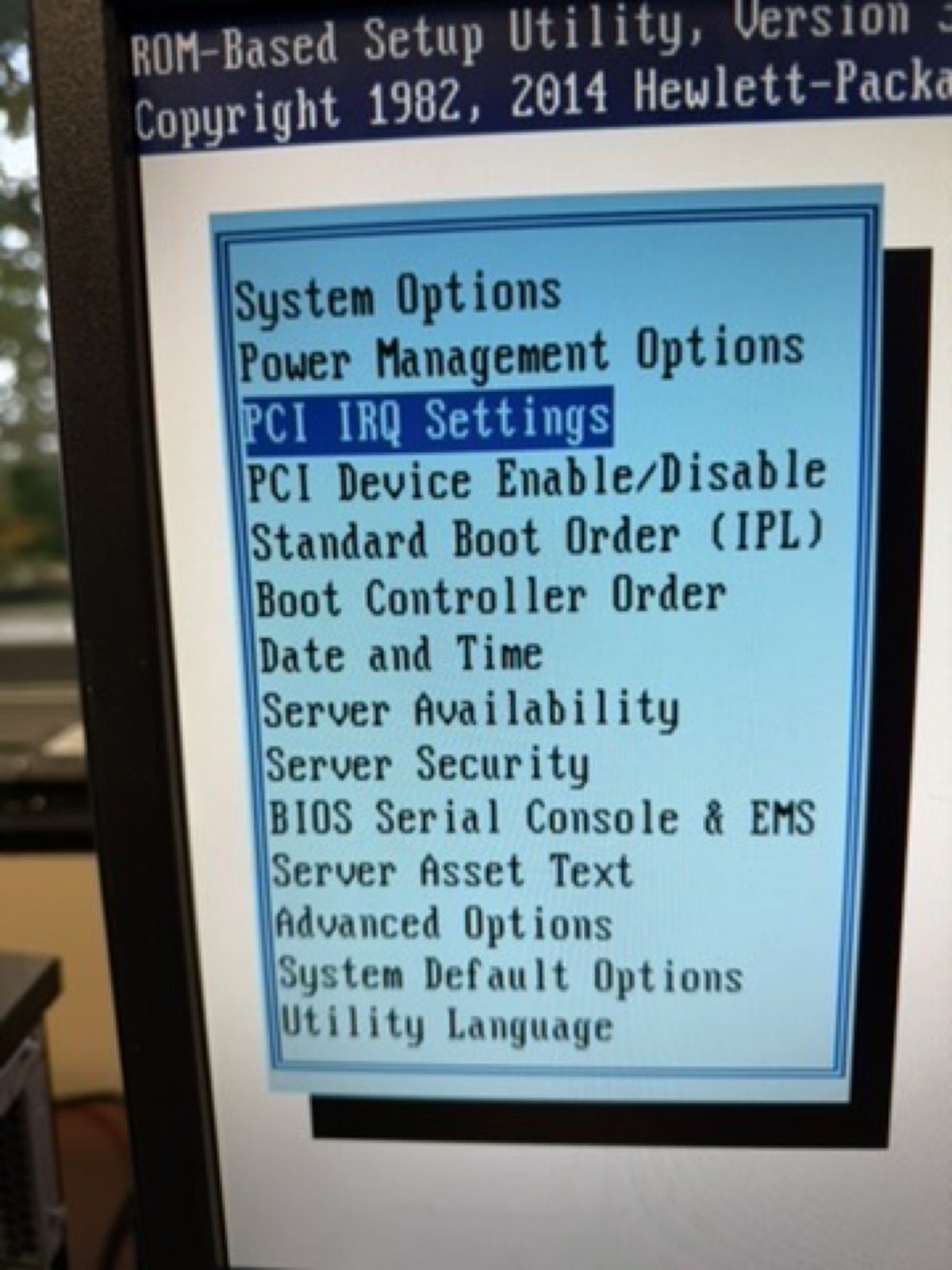

Get the server to boot from the USB

On the HP ML350p Gen8

1. Plug a keyboard and monitor into the server.

2. Power on and watch for the HP splash screen.

3. To change the saved boot order, press F9 during POST for RBSU (ROM-Based Setup Utility) and move USB to the top.

4. Or, more simply, press F11 during POST for the one-time Boot Menu override.

5. From the boot menu, pick the USB device; the server boots straight into the Proxmox installer.

F2 is for Dell servers; HP uses F9 / F10 / F11. Always check the on-screen prompt during POST — different vendors map different keys.

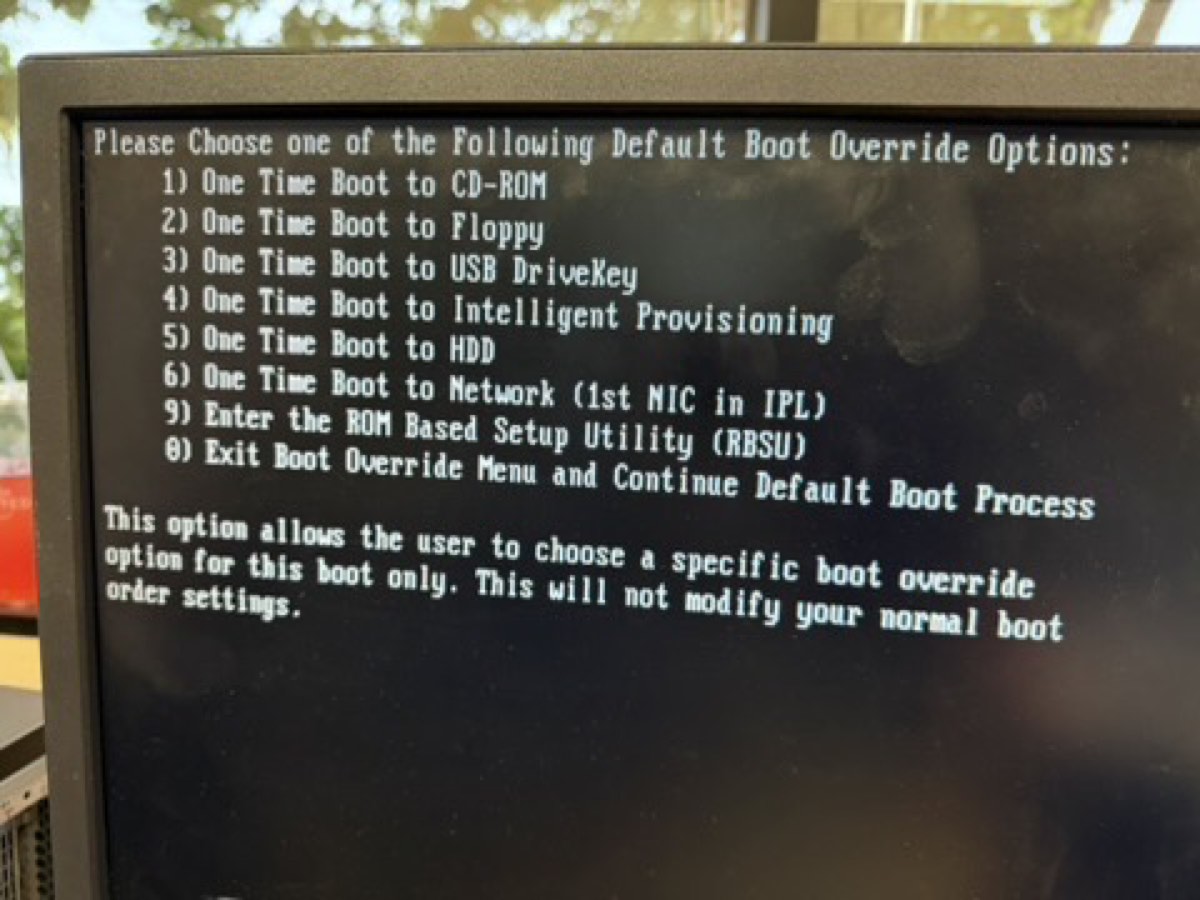

↑ POST prompt showing F9 (RBSU), F10 (Intelligent Provisioning), F11 (Boot Menu)

↑ F11 boot override — pick the USB or CD-ROM (iLO virtual media)

Three drives, one fault-tolerant array

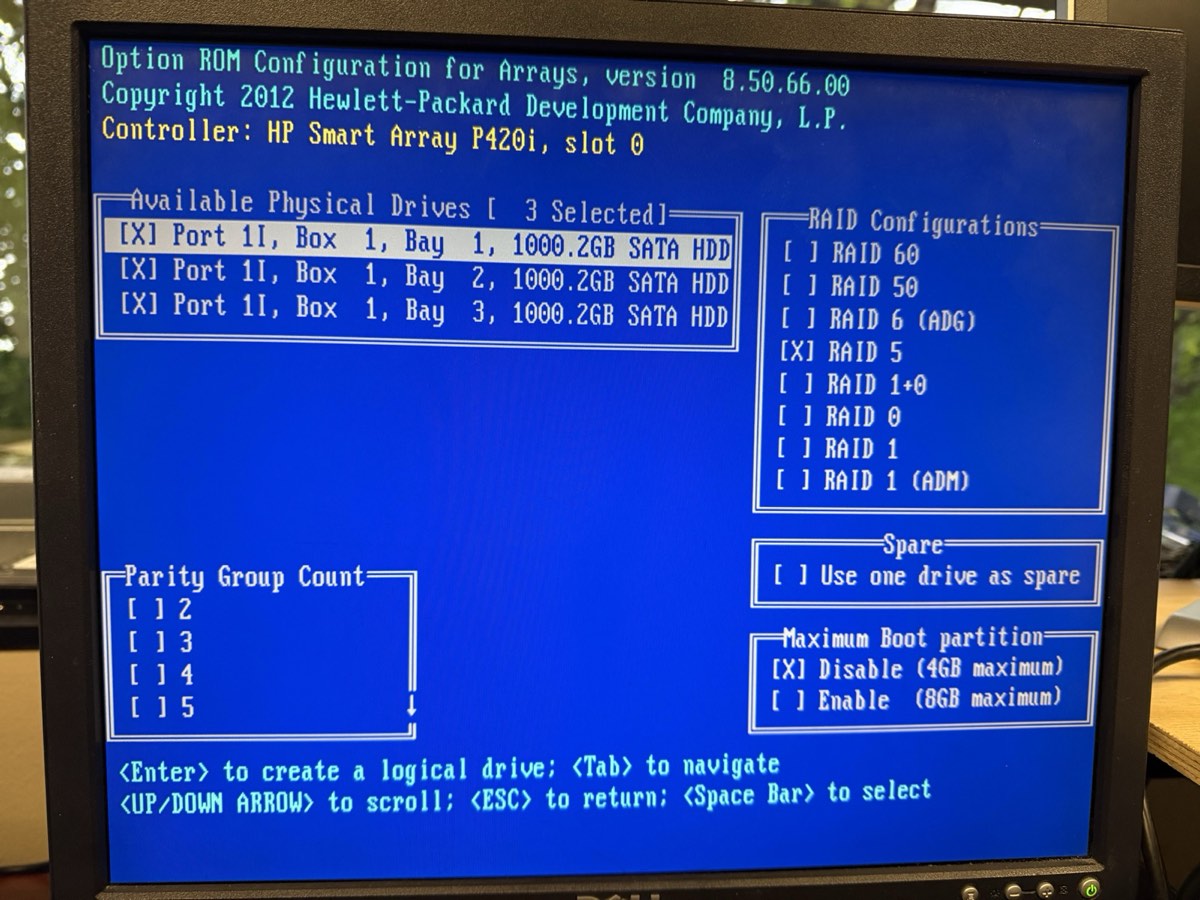

3 drives marked [X] · RAID 5 selected · Max Boot Partition disabled (4 GB)

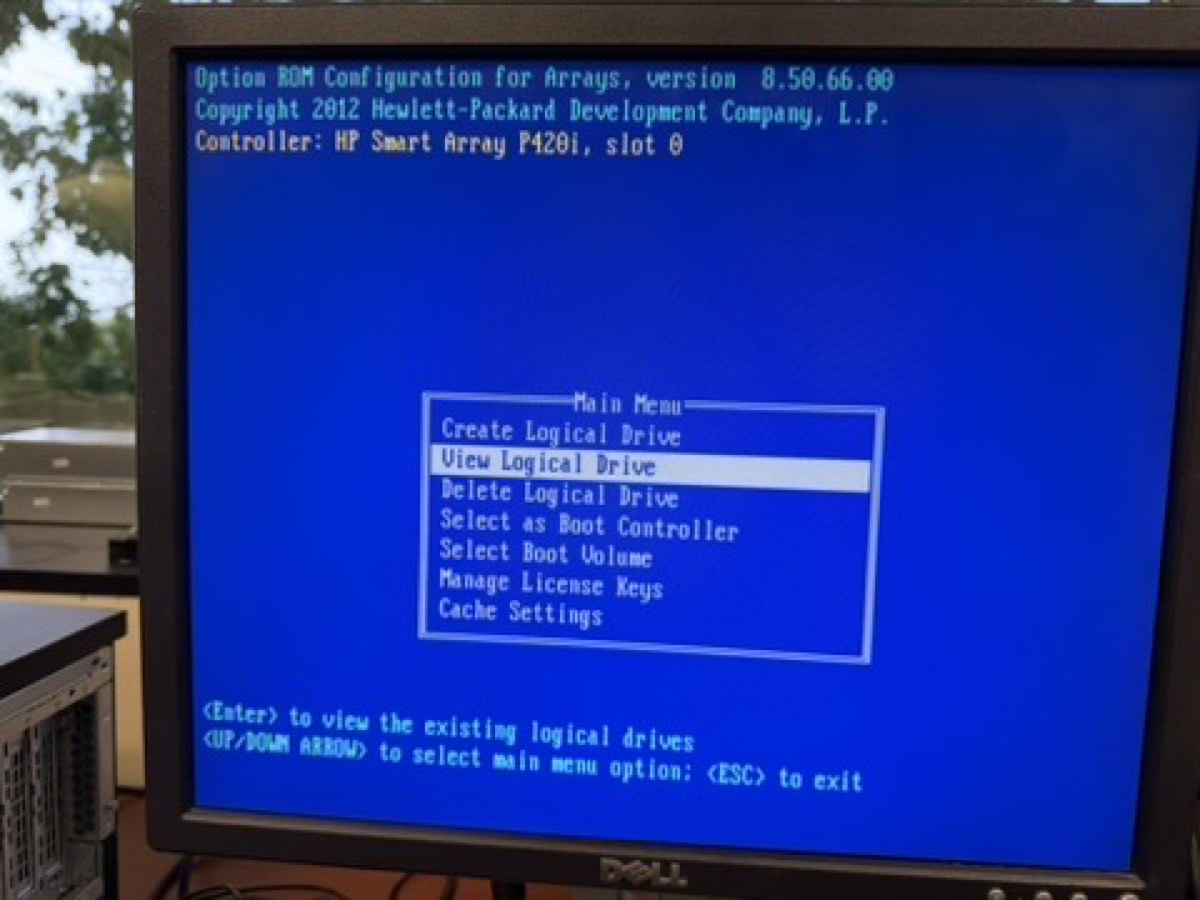

↑ "View Logical Drive" is now selectable — confirms the array exists

Procedure

1. Reboot and watch POST for the Smart Array banner.

2. Press F8 to enter ORCA (Option ROM Configuration for Arrays).

3. If a stale array exists, choose Delete Logical Drive first (this erases its data).

4. Choose Create Logical Drive.

5. Press Space on each of the 3 drives at Port 11, Box 1, Bays 1–3.

6. Select RAID 5 and keep the defaults: 256 KiB stripe size, Array Accelerator enabled.

7. Press Enter to commit, F8 to save, and Esc to exit.

Result

1 logical drive · ~2 TB usable from 3 TB raw (one drive's worth of capacity holds parity) · parity initialization runs in the background. The array survives one drive failure.
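Here is why one drive's worth of capacity buys tolerance for one failure. RAID 5 stores the XOR of the data blocks in each stripe, and XOR-ing the survivors regenerates whatever is missing. The sketch below is a toy model with one byte per drive. The P420i works on whole stripes (256 KiB here) and rotates parity across the drives, which this does not model. `xor_all` is a hypothetical helper.

```bash
#!/usr/bin/env bash
# XOR all arguments together (integers such as 0xA7); prints the result in decimal.
xor_all() {
  local acc=0 b
  for b in "$@"; do
    acc=$(( acc ^ b ))
  done
  echo "$acc"
}

# One stripe on a 3-drive RAID 5: two data bytes plus their parity.
d1=0xA7
d2=0x3C
parity=$(xor_all "$d1" "$d2")

# Drive 1 fails: rebuild its byte from the surviving data and the parity.
rebuilt=$(xor_all "$d2" "$parity")
printf 'parity=0x%02X rebuilt_d1=0x%02X\n' "$parity" "$rebuilt"
```

Running it prints `parity=0x9B rebuilt_d1=0xA7`, so the rebuilt byte matches the lost one. A second failure before the rebuild finishes would leave two unknowns and only one parity value, which is why RAID 5 stops at one drive.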

ⓘ HP ORCA auto-starts parity initialization — no separate F2 → Initialize step like Dell PERC controllers require. The lab doc's "Initialize" step is for Dell hardware.

⚠ Drives are mismatched ages (2010 / 2018). Run weekly SMART checks; the oldest drive is statistically most likely to fail first.
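Below is a sketch of the weekly SMART check. Several assumptions should be verified on the host. `smartmontools` must be installed (Proxmox ships it). The P420i must be driven by the Linux `hpsa` driver, so that `smartctl` reaches the physical drives through `-d cciss,N`. Indices 0 to 2 are assumed to be the three drives, although their mapping to bays isn't guaranteed. The function names are hypothetical.

```bash
#!/usr/bin/env bash
# Reduce smartctl health output (read on stdin) to a single verdict.
# ATA/SATA drives report "...self-assessment test result: PASSED" (or FAILED!);
# SAS drives report "SMART Health Status: OK".
smart_verdict() {
  local line
  line=$(grep -m1 -E 'self-assessment test result|SMART Health Status')
  case $line in
    *PASSED*|*": OK"*) echo PASSED ;;
    *FAILED*)          echo FAILED ;;
    *)                 echo UNKNOWN ;;
  esac
}

# Check the three drives behind the controller (assumes the logical drive is /dev/sda).
check_array_drives() {
  local i
  for i in 0 1 2; do
    echo "cciss,$i: $(smartctl -H -d "cciss,$i" /dev/sda | smart_verdict)"
  done
}
```

Run `check_array_drives` as root. To make it weekly, save it as a script and add an `/etc/cron.d/` entry such as `0 7 * * 1 root /usr/local/sbin/smart-weekly.sh` (the path is hypothetical). Any verdict other than PASSED deserves a full `smartctl -a` look at that drive.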

Trading some space for one drive of forgiveness

Options we considered

| Level | Usable (3× 1 TB) | Protection | Verdict |
|---|---|---|---|
| RAID 0 | 3 TB | None | Rejected |
| RAID 1 (mirror) | 1 TB | 1 drive fail | Too little space |
| RAID 5 | 2 TB | 1 drive fail | Chosen ✓ |
| RAID 6 | n/a | 2 drive fails | Needs at least 4 drives |
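Usable capacity follows from simple arithmetic on n equal drives. RAID 0 keeps all n drives, and a mirror keeps one drive's worth. RAID 5 gives up one drive to parity (n − 1), and RAID 6 gives up two (n − 2). The sketch below applies that rule. `usable_tb` is a hypothetical helper that assumes equal whole-TB drives. With mixed sizes, every member counts as the smallest.

```bash
#!/usr/bin/env bash
# usable_tb LEVEL DRIVES DRIVE_TB
# Prints usable capacity in TB for equal-size drives; returns 1 if the level
# needs more drives than given (or the level is unknown).
usable_tb() {
  local level=$1 n=$2 size=$3
  case $level in
    0) (( n >= 1 )) || return 1; echo $(( n * size )) ;;
    1) (( n >= 2 )) || return 1; echo "$size" ;;                # every drive holds the same copy
    5) (( n >= 3 )) || return 1; echo $(( (n - 1) * size )) ;;  # one drive's worth of parity
    6) (( n >= 4 )) || return 1; echo $(( (n - 2) * size )) ;;  # two drives' worth of parity
    *) return 1 ;;
  esac
}

for level in 0 1 5 6; do
  if cap=$(usable_tb "$level" 3 1); then
    echo "RAID $level: $cap TB usable"
  else
    echo "RAID $level: not possible with 3 drives"
  fi
done
```

Expect the OS to report a little less. 2 TB is 2 × 10¹² bytes, which Linux tools display as about 1.82 TiB.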

Why this was the right call

- 3 drives = RAID 5 is the natural fit — minimum drive count met, best space efficiency for the disks we have

- Survives a single drive failure with no data loss — important given our mixed-age drive pool

- ~2 TB usable comfortably hosts 4 planned VMs plus ISOs, snapshots, and backups

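- Hardware RAID on the P420i offloads parity to the controller and its flash-backed write cache; ZFS would need direct access to the disks, which means reconfiguring the controller into HBA mode first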

From installer prompt to running hypervisor

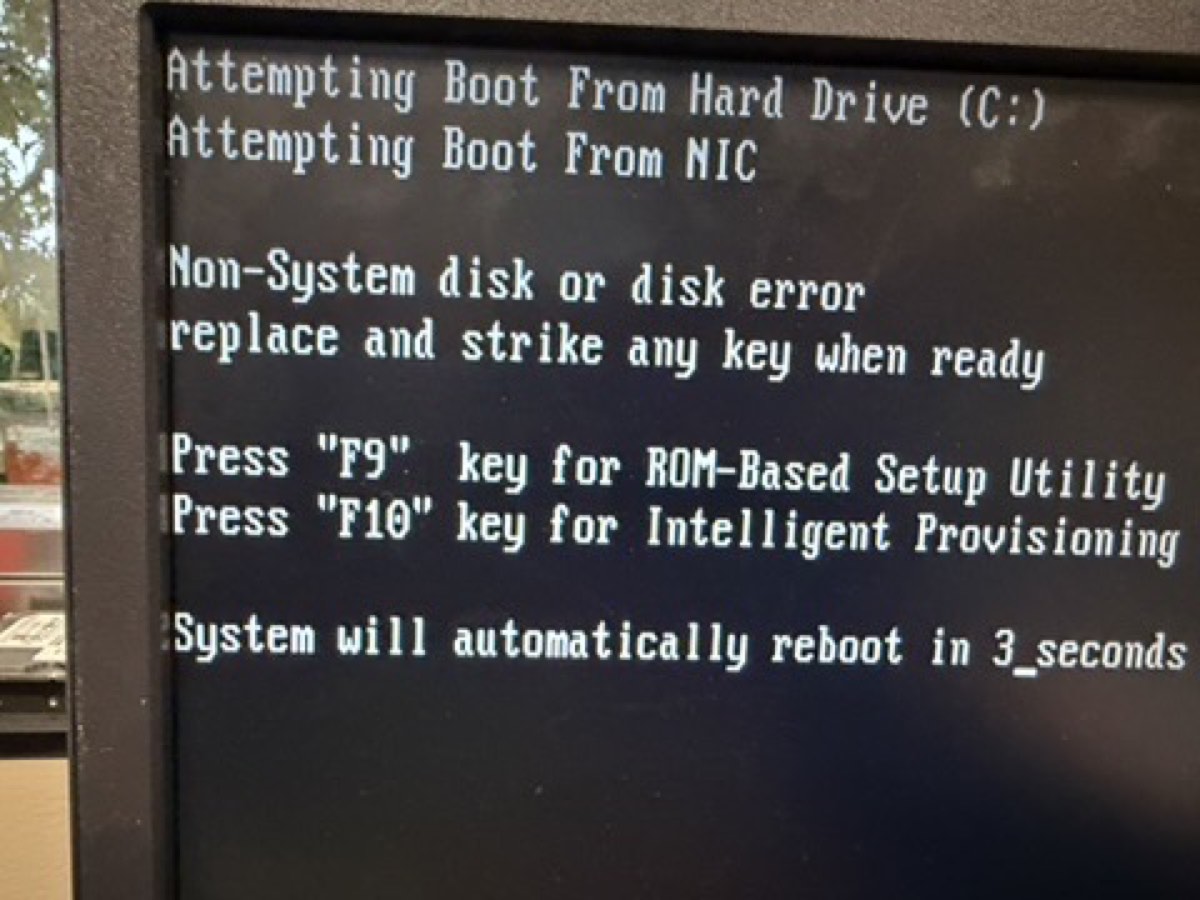

↑ Pre-install POST: array detected, "Non-System disk" — exactly what we want

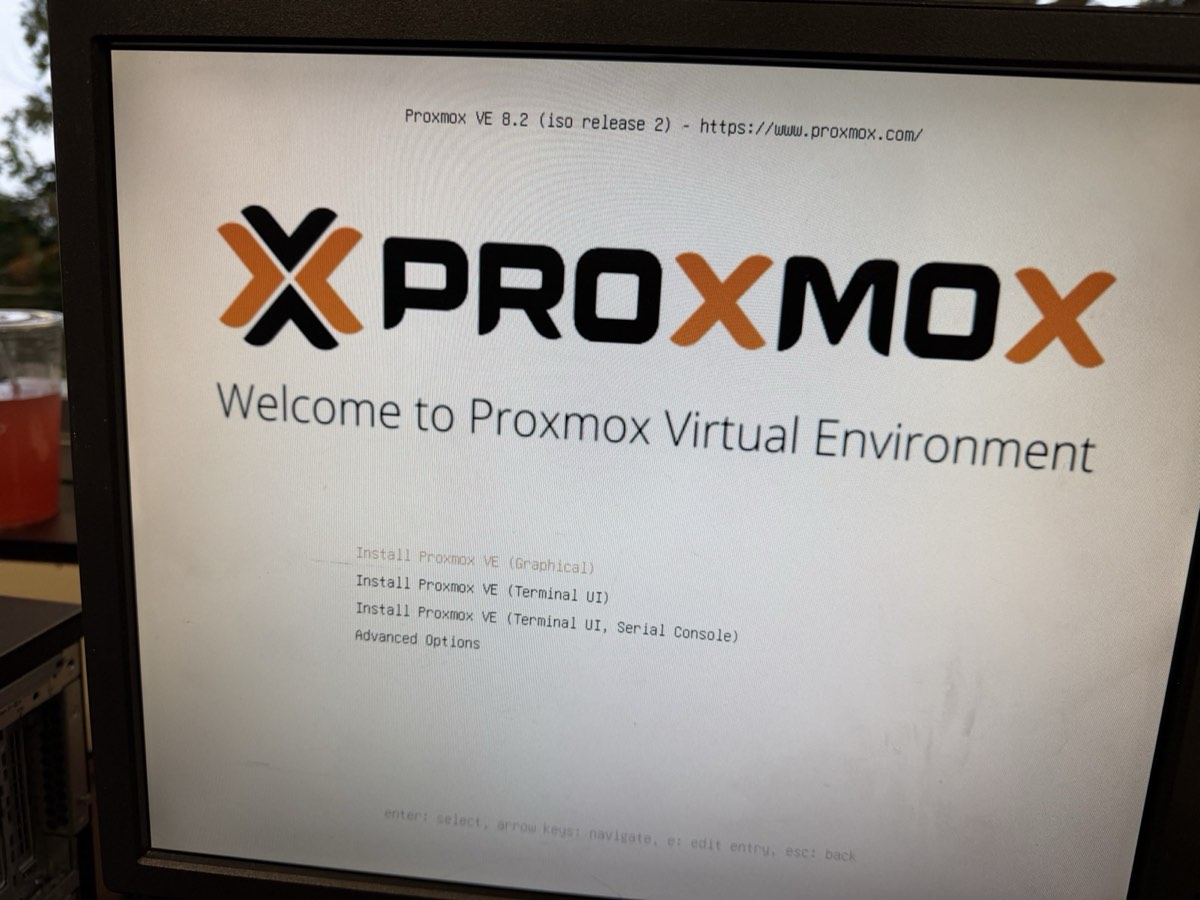

↑ Picked Install Proxmox VE (Graphical)

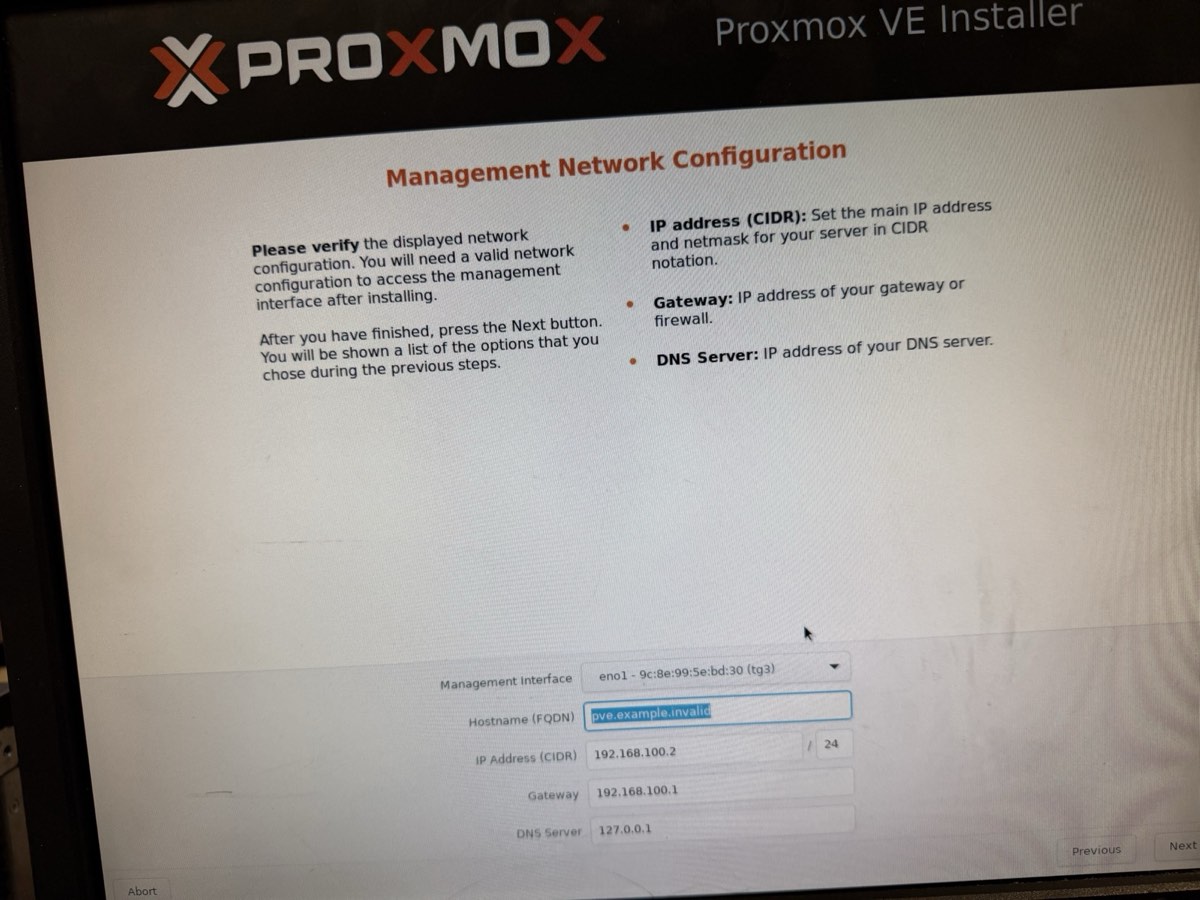

↑ Management network on eno1

Installer values

| Filesystem | ext4 on /dev/sda |

| Target disk | RAID 5 array (~2 TB usable) |

| Country / TZ | United States / America/Chicago |

| Hostname | tctmachine |

| IP (CIDR) | 10.10.10.10/16 |

| Gateway | 10.10.10.1 |

| DNS | 1.1.1.1 (Cloudflare) |

ⓘ Used /16 instead of the lab doc's /24 example — covers the entire 10.10.0.0/16 school subnet so the host treats every 10.10.x.y as local.
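The effect of the prefix length is easy to check. An address is on-link when it matches the host's address in the first /N bits. Below is a minimal sketch in bash arithmetic. `ip_to_int` and `in_subnet` are hypothetical helpers. The sample address 10.10.200.5 is made up for illustration; only 10.10.10.10 and 10.10.10.1 come from our install.

```bash
#!/usr/bin/env bash
# Convert dotted-quad IPv4 (e.g. 10.10.10.10) to a 32-bit integer.
ip_to_int() {
  local IFS=.
  local -a o
  read -r -a o <<< "$1"
  echo "$(( (o[0] << 24) | (o[1] << 16) | (o[2] << 8) | o[3] ))"
}

# in_subnet IP ADDRESS/PREFIX -> success if IP shares the first PREFIX bits with ADDRESS.
in_subnet() {
  local ip=$1 net=${2%/*} bits=${2#*/}
  # Keep the top $bits bits; the & trims bash's 64-bit shift back to 32 bits
  local mask=$(( (0xFFFFFFFF << (32 - bits)) & 0xFFFFFFFF ))
  (( ($(ip_to_int "$ip") & mask) == ($(ip_to_int "$net") & mask) ))
}

# Host 10.10.10.10: with /16, 10.10.200.5 is on-link; with /24 it is not.
for cidr in 10.10.10.10/16 10.10.10.10/24; do
  if in_subnet 10.10.200.5 "$cidr"; then
    echo "$cidr: 10.10.200.5 is local"
  else
    echo "$cidr: 10.10.200.5 goes via the gateway"
  fi
done
```

With /16 the host talks to any 10.10.x.y directly. With /24 everything outside 10.10.10.0–255 is sent to the gateway 10.10.10.1.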

What happens during install

- Installer detects the RAID array as a single disk (the controller hides the parity)

- Wipes and partitions `/dev/sda` automatically

- Lays down the Debian-based base system + Proxmox kernel

- Installs the web stack on port 8006

- Reboots — about 5 minutes total

Remove the USB before the reboot, or the server will boot back into the installer.

Reach the Proxmox dashboard from the school LAN

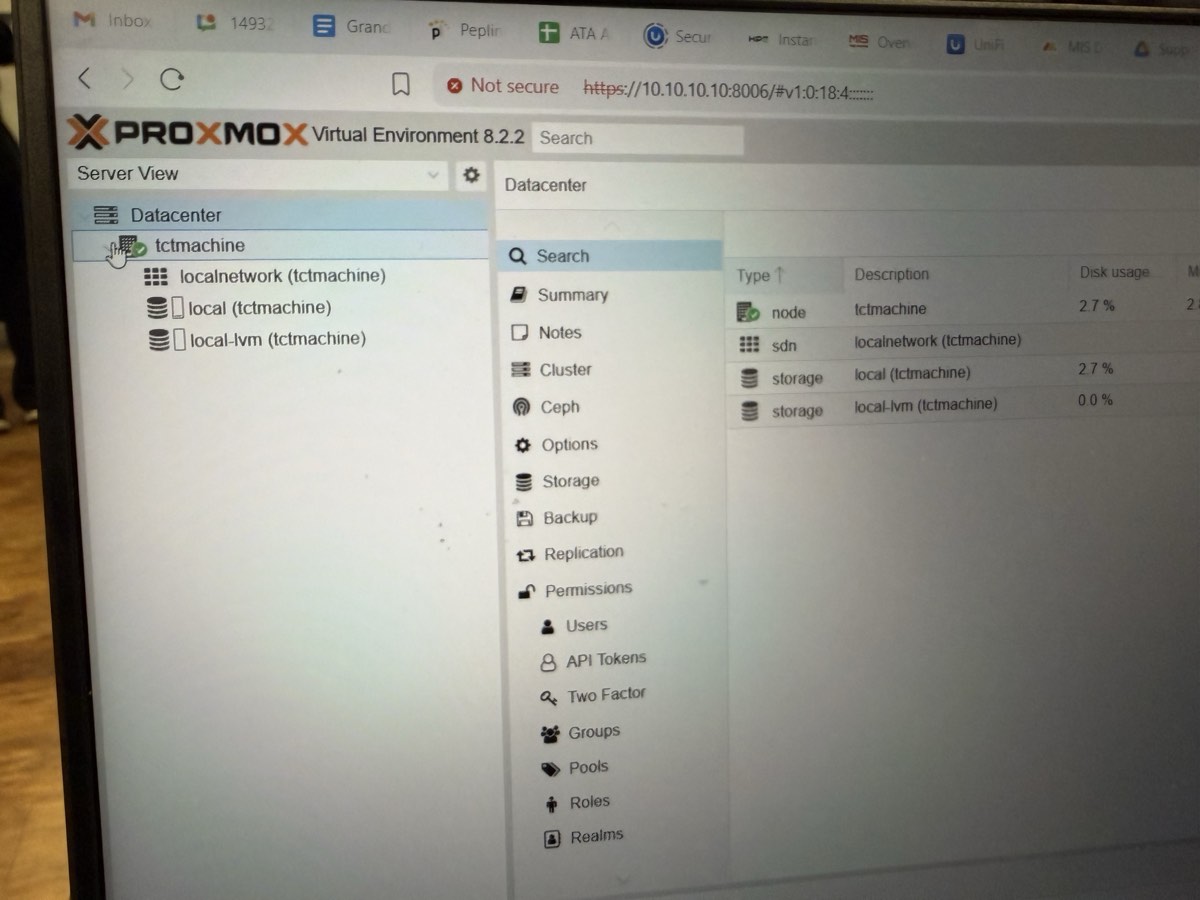

↑ https://10.10.10.10:8006 from a laptop on the school LAN. Logged in as root.

What success looks like

- ✓ Proxmox VE 8.2.2 running

- ✓ Node `tctmachine` visible under Datacenter

- ✓ `local` storage mounted · 2.7% used (base system)

- ✓ `local-lvm` thin pool ready · 0.0% used (waiting for VMs)

- ✓ Internet reachable via `1.1.1.1`

- ✓ TLS, login, and summary widgets all functional

Quick checks from the host shell

```bash
# confirm IP + gateway (the installer puts the IP on the vmbr0 bridge; eno1 is its bridge port)
ip addr show vmbr0
ip route
# confirm internet + DNS
ping -c 2 1.1.1.1
ping -c 2 google.com
# confirm storage
pvesm status
```

Stage installer ISOs for the Week 2 VMs

Procedure

1. From a school desktop, open `https://10.10.10.10:8006`.

2. Log in as `root`.

3. In the sidebar tree: Datacenter → `tctmachine` → local (not `local-lvm`).

4. In the center pane, click the ISO Images tab.

5. Click Upload.

6. Click Select File… and navigate to `\\itsdc3\its`.

7. Pick an ISO and confirm Upload.

8. Repeat for each ISO planned for Week 2.

Why this happens now

Proxmox needs installer media available before any VM can be created. The ISOs sit in local storage and get attached as virtual CD-ROMs to new VMs in Week 2.

ISOs we staged

- Ubuntu Server — for the Jump Box and Linux Server

- Windows Server — for the AD / DNS / DHCP / IIS roles

- Windows 10 — client machine for testing

- Ubuntu Desktop — Linux client for testing

Storage path on the host: /var/lib/vz/template/iso/
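Because `local` is a plain directory on the host, staging can be checked from the shell as well as from the web UI. This sketch assumes root shell access on the Proxmox host. `missing_isos` is a hypothetical helper, and the filenames in the example are placeholders, not the exact ISOs we uploaded. `pvesm list local --content iso` gives Proxmox's own view of the same directory.

```bash
#!/usr/bin/env bash
# missing_isos DIR FILE... : print each expected ISO not yet present in DIR.
# Returns 0 when everything is staged, 1 otherwise.
missing_isos() {
  local dir=$1 f status=0
  shift
  for f in "$@"; do
    if [[ ! -f "$dir/$f" ]]; then
      echo "$f"
      status=1
    fi
  done
  return "$status"
}

# Example (placeholder filenames):
#   missing_isos /var/lib/vz/template/iso ubuntu-server.iso windows-server.iso \
#       windows10.iso ubuntu-desktop.iso || echo "upload the files listed above"
```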

✓ With ISOs staged, Week 2 can start immediately — no waiting on uploads.

Proxmox VE 8.2.2 is live

Done

- ✓ Every component identified and documented

- ✓ iLO 4 reachable for remote management

- ✓ RAID 5 array built on 3× 1 TB SATA (3 TB raw, ~2 TB usable)

- ✓ Proxmox VE 8.2.2 installed on the array

- ✓ Static management IP `10.10.10.10/16` on `vmbr0` (bridged to `eno1`)

- ✓ Web UI live at `https://10.10.10.10:8006`

- ✓ Internet reachable (DNS `1.1.1.1`)

Screenshots captured for the demo

- POST banner showing the RAID 5 array, 1785 error cleared

- ORCA main menu with the logical drive present

- Proxmox installer summary screen

- Proxmox login + node dashboard

Carried into Week 2

- Create `vmbr1` (DMZ · 172.16.0.0/24) and `vmbr2` (LAN · 192.168.0.0/24)

- Stand up the Jump Box on `vmbr1`

- Deploy Windows + Linux servers on `vmbr2`

- Wire NAT and port-forwarding between zones

Bare metal → live hypervisor

HP ProLiant ML350p Gen8 · 1× Xeon E5-2609 v2 · 32 GB DDR3 ECC · RAID 5 on 3× 1 TB SATA (~2 TB usable)

Proxmox VE 8.2.2 reachable at https://10.10.10.10:8006