What We Built

A hardened small-business datacenter — from bare metal to live hypervisor — in one week.

HP ML350p Gen8

3 drives in RAID 5

3 TB total storage (2 TB usable)

32 GB DDR3 ECC RAM

Instructor: Anthony Pena

S/N 2M251705M9 · Product 736983-S01

What we set out to build

🏢 A mini datacenter

One physical server hosting multiple virtual machines — a Windows domain controller, a Linux web stack, a database, a jump box.

🌐 A segmented network

Three zones: management, DMZ, and private LAN — isolated by firewall rules on the Proxmox host itself.

📋 Proof it works

Hardware documentation, asset tracking, a Packet Tracer diagram, and a live walkthrough on demo day.

This deck shows what we accomplished through end of Week 1 and what's coming next.

HP ProLiant ML350p Gen8 tower server

As delivered

| Manufacturer | HP |

| Model | ProLiant ML350p Gen8 |

| Product ID | 736983-S01 |

| Serial # | 2M251705M9 |

| Form factor | 5U Tower (rack convertible) |

| BIOS | P72 · 08/02/2014 |

| Chassis features | Hot-plug drives, redundant PSU, hot-plug fans |

| Management | iLO 4 (dedicated RJ-45) |

Why this platform

- Enterprise-grade — hot-plug drives, ECC RAM, redundant PSUs — ideal for a 24/7 lab

- iLO 4 lets us install and operate without touching the physical machine

- Smart Array P420i is hardware RAID — faster and more forgiving than software RAID

- 4× 1 GbE onboard NICs give us room to segment traffic

- Two CPU sockets and 24 DIMM slots = headroom for future expansion

What's inside

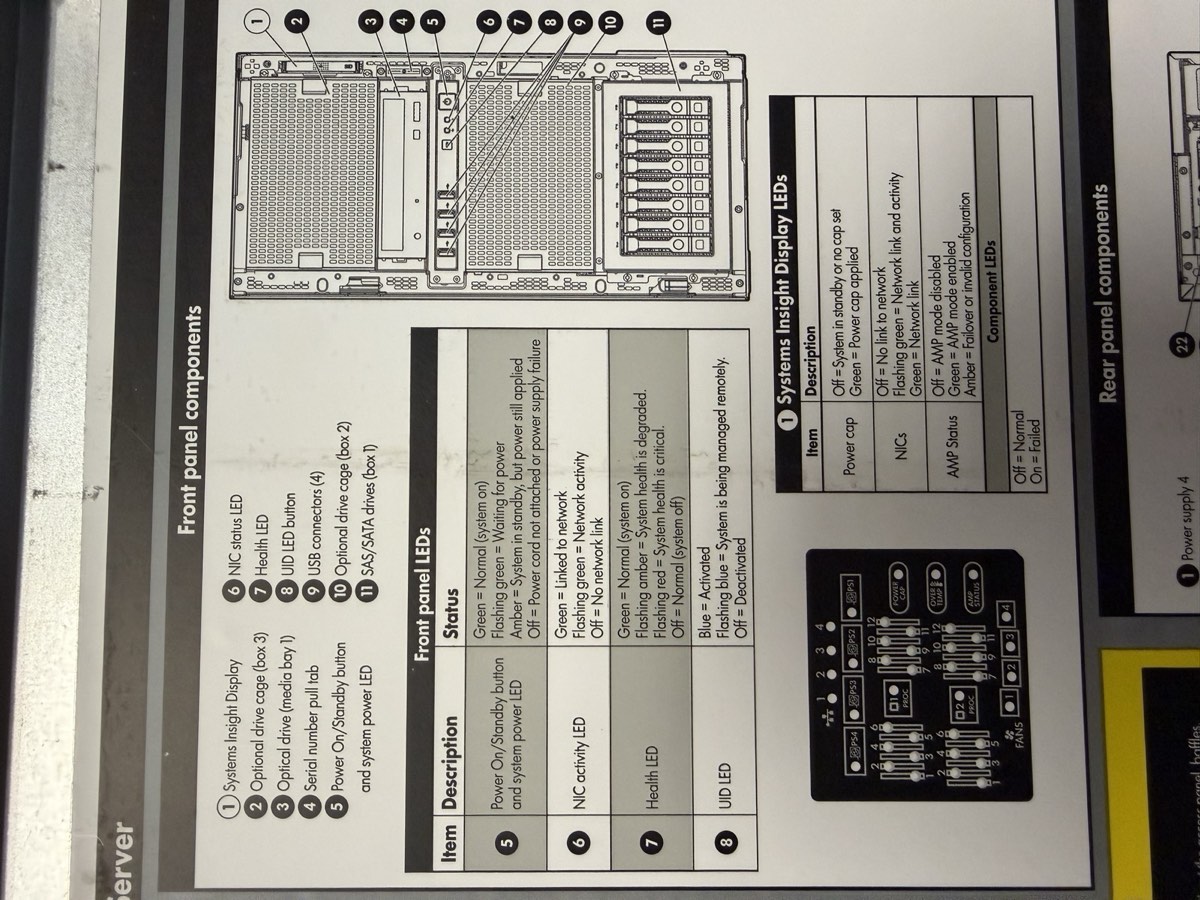

Front panel — Systems Insight Display, drive cage, USB, serial pull-tab, power button

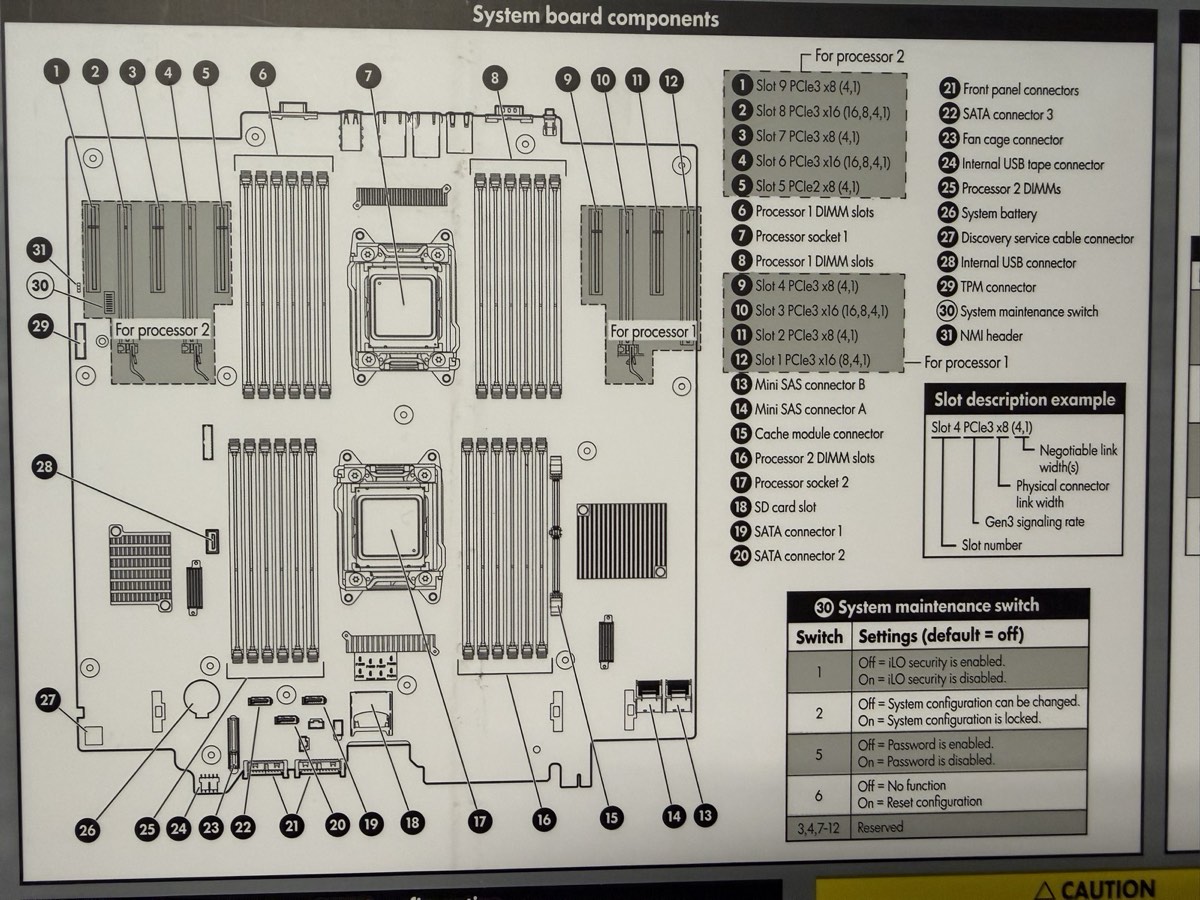

System board — 2 CPU sockets, 24 DIMM slots, P420i RAID, PCIe risers

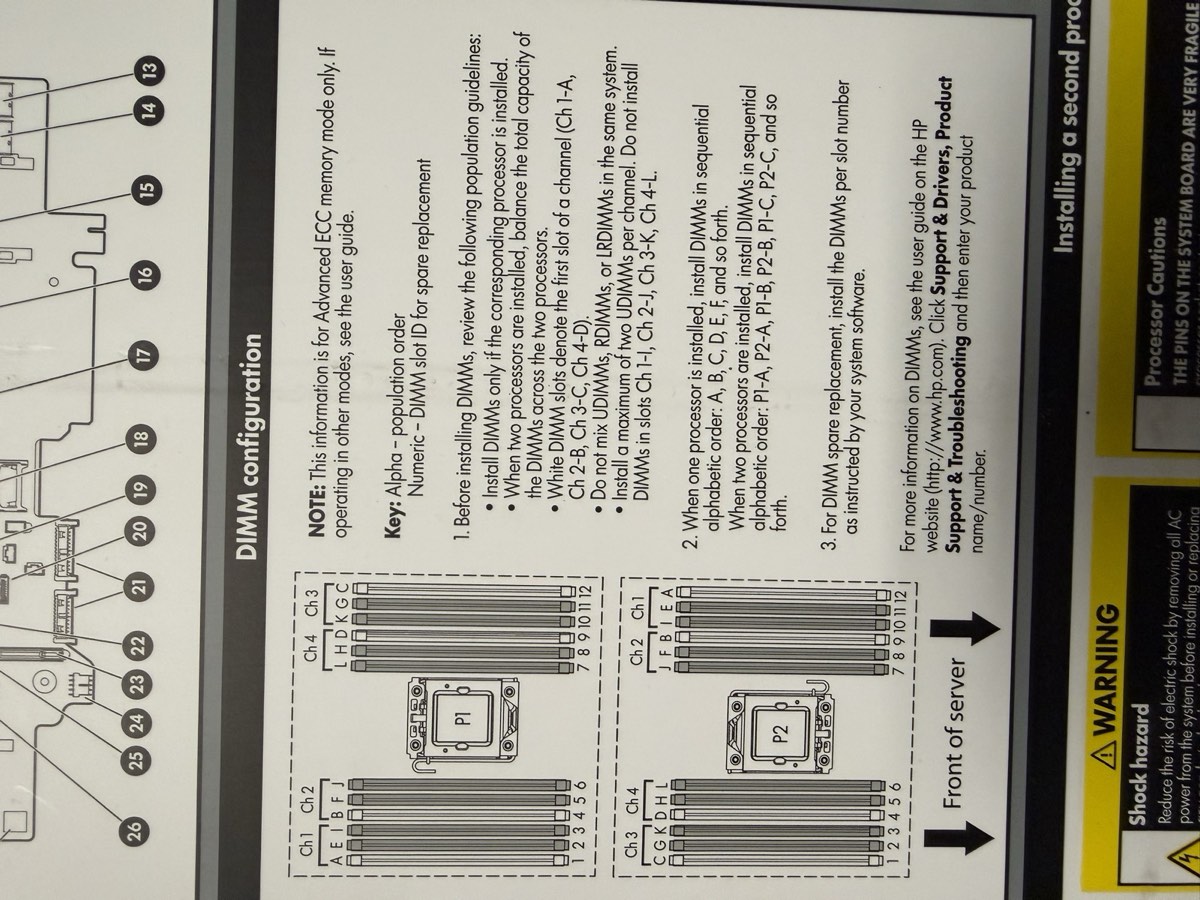

DIMM population chart — white slots first, matched pairs, balance across channels

Matched every part to a datasheet

RAM · 4× 8 GB

SK Hynix HMT41GR7BFR4A-PB · PC3L-12800R · DDR3L-1600 ECC RDIMM · 1.35 V

Drive 1 · WD Blue

WD10EZEX · 1 TB · 7200 RPM · 64 MB cache · SATA · 2018

Drive 2 · WD RE3

WD1002FBYS · 1 TB · 7200 RPM · 32 MB cache · SATA · 2010

PSU · 2× 460 W 1+1

HP HSTNS-PL14 · Gold Common Slot · p/n 511777-001

Additional: 1× Xeon E5-2609 v2 (socket 1); 3rd 1 TB drive in bay 3; P420i RAID controller onboard; iLO 4 management

Final hardware spec sheet

Compute

| CPU | 1× Xeon E5-2609 v2 |

| Cores / threads | 4C / 4T (no HT) |

| Base clock | 2.50 GHz (no turbo) |

| L3 cache | 10 MB |

| Socket 2 | empty |

| VT-x / VT-d | ✓ |

Memory

| Installed | 32 GB = 4× 8 GB |

| Type | DDR3L-1600 ECC RDIMM |

| Effective speed | 1333 MT/s (IMC cap) |

| Slots used | 4 of 24 · quad-channel ✓ |

| NUMA nodes | 1 (single CPU) |

Storage

| Controller | Smart Array P420i |

| Firmware | 8.50.66.00 |

| Cache | FBWC capacitor (likely 512 MB) |

| Drives | 3× 1 TB SATA 3.5" (3 TB total) |

| Bay locations | Port 11, Box 1, Bays 1–3 |

Networking

| Onboard NIC | HP 331i (Broadcom BCM5719) |

| Ports | 4× 1 GbE |

| PXE / SR-IOV | ✓ / ✓ |

| Remote mgmt | iLO 4 (dedicated RJ-45) |

Power & Cooling

| PSUs | 2× 460 W 1+1 |

| Model | HP HSTNS-PL14 Gold |

| Input | 100–240 V · 50/60 Hz |

| Fans | 4 hot-plug redundant |

Firmware

| BIOS | P72 · 08/02/2014 |

| Bootblock | 03/05/2013 |

| SATA Option ROM | v2.00.CO2 (2011) |

| NIC Boot Agent | Broadcom NetXtreme (2014) |

| Boot mode | Legacy |

The network we designed

vmbr0 · Management + School LAN

10.10.0.0/16 · GW 10.10.10.1 · iLO + Proxmox UI + updates

vmbr1 · DMZ

172.16.0.0/24 · public-facing · Web + Jump box

vmbr2 · Private LAN

192.168.0.0/24 · trusted · AD + DNS + DHCP + SQL

Why we chose RAID 5

Options considered

| Level | Usable | Protection | Verdict |
|---|---|---|---|
| RAID 0 | 3 TB | None | Rejected |
| RAID 1 (mirror) | 1 TB | 1 fail | Too little space |
| RAID 5 | 2 TB | 1 fail | Chosen ✓ |
| RAID 6 | 1 TB | 2 fails | Needs 4 drives |

Rationale

- 3 drives = RAID 5 is the natural fit: minimum drive count met, best space efficiency with protection (2 of 3 TB usable, about 67%)

- Survives 1 drive failure without data loss, which matters with our mixed-age drive pool

- 2 TB usable comfortably hosts 4 planned VMs plus ISOs + snapshots + backups

- Hardware RAID on the P420i offloads parity work to the controller and its cache, and avoids switching the controller to HBA mode, which ZFS would need

⚠ Caveat: drives are mismatched: WD Blue (2018, consumer, no TLER) + WD RE3 (2010) + a 3rd. The array operates at the slowest member's speed, and the oldest drive is statistically most likely to fail first. Weekly SMART checks recommended.
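The usable-capacity column follows from simple per-level arithmetic. Below is a minimal sketch, assuming equal-size drives and ignoring filesystem and controller overhead. The function name `usable_tb` is hypothetical and not part of any HP tool.

```shell
# usable_tb LEVEL DRIVES SIZE_TB
# Prints the usable capacity in TB for equal-size drives.
# Assumption: filesystem and controller overhead are not counted.
usable_tb() {
    level=$1
    drives=$2
    size_tb=$3
    case "$level" in
        0) echo $(( drives * size_tb )) ;;            # striping, no redundancy
        1) echo "$size_tb" ;;                          # mirror: capacity of one drive
        5) if [ "$drives" -lt 3 ]; then
               echo "RAID 5 needs at least 3 drives" >&2
               return 1
           fi
           echo $(( (drives - 1) * size_tb )) ;;      # one drive's worth of parity
        6) if [ "$drives" -lt 4 ]; then
               echo "RAID 6 needs at least 4 drives" >&2
               return 1
           fi
           echo $(( (drives - 2) * size_tb )) ;;      # two drives' worth of parity
        *) echo "unsupported RAID level: $level" >&2
           return 1 ;;
    esac
}

usable_tb 5 3 1    # prints 2
```

With three 1 TB drives, RAID 5 leaves 2 TB for data, and the RAID 6 call fails because the minimum drive count is not met.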

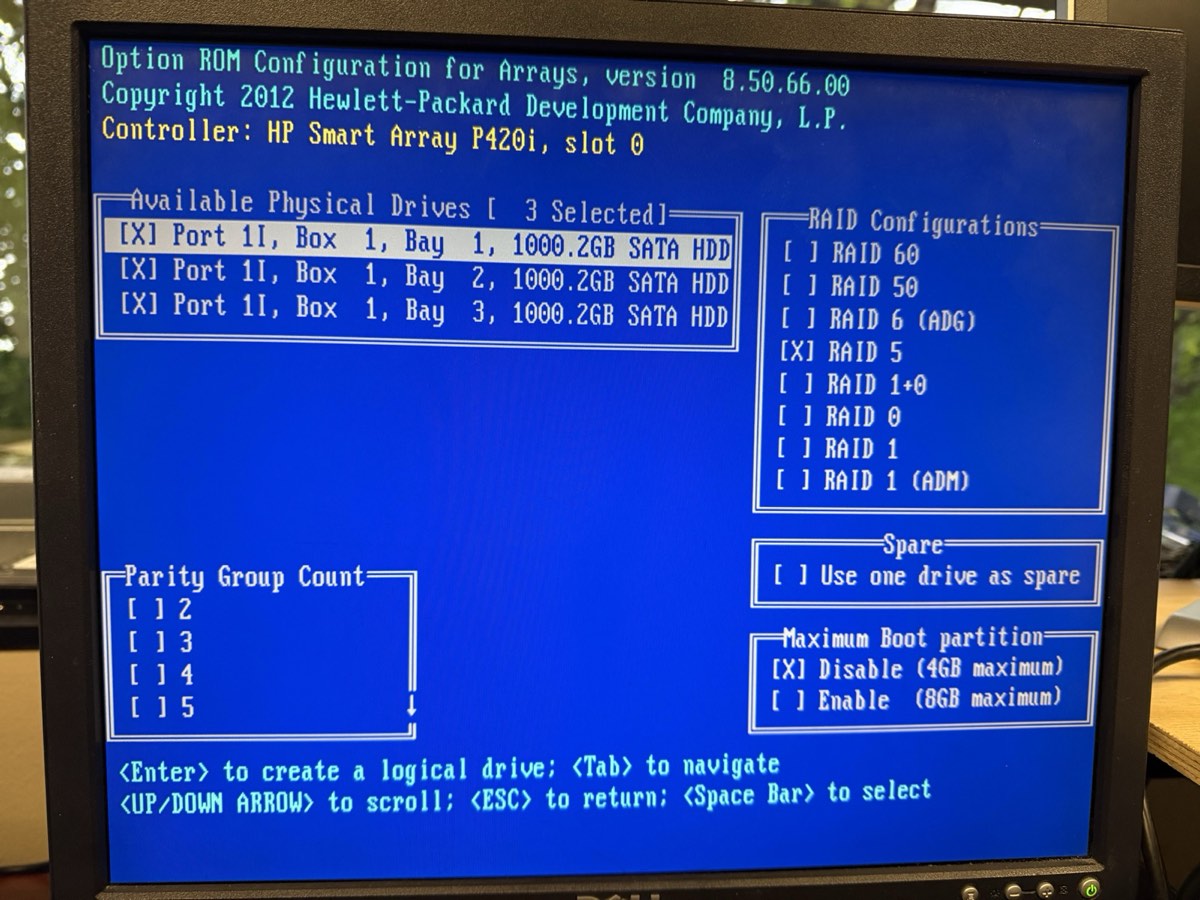

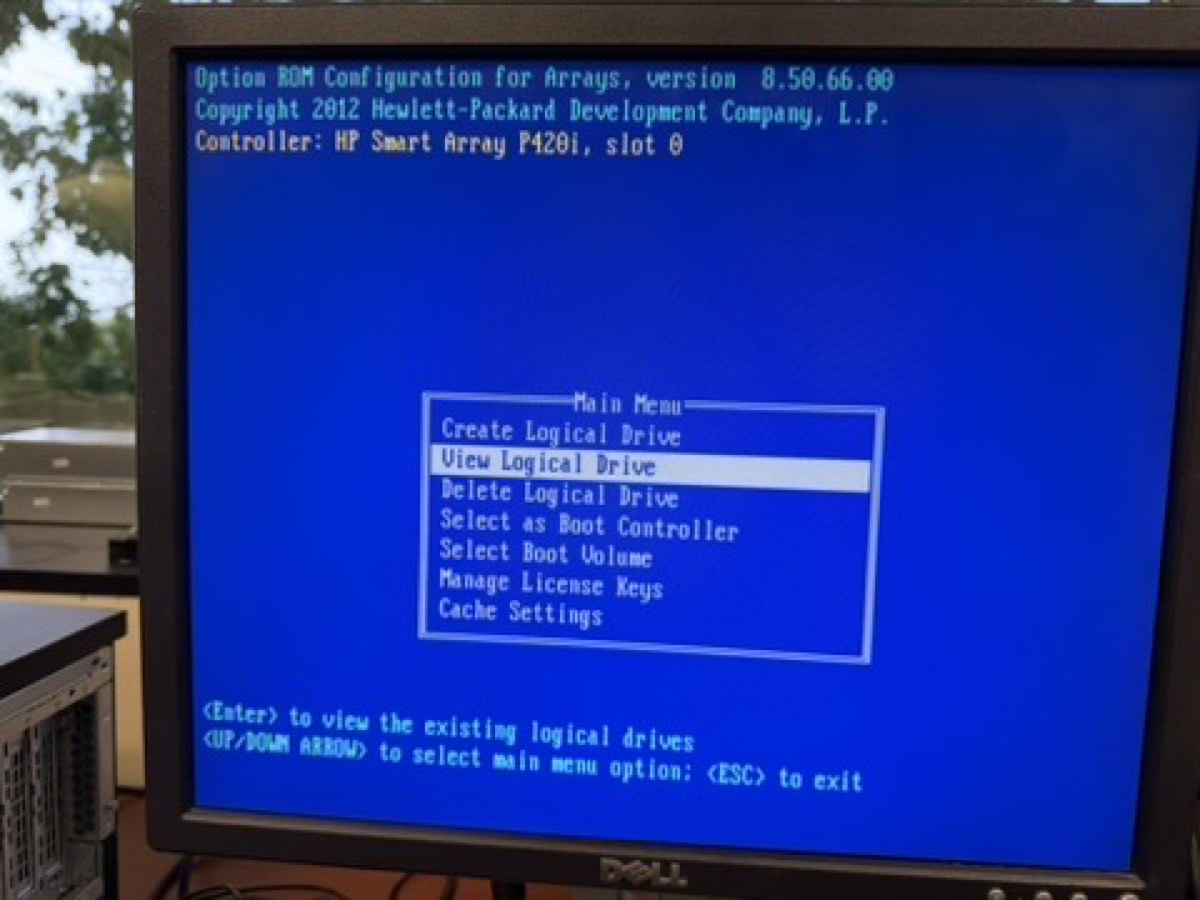

Built the RAID 5 array in ORCA

3 drives marked [X] · RAID 5 selected · Max Boot Partition disabled (4 GB)

What we pressed

1. Rebooted, watched POST for the Smart Array banner

2. Pressed F8 to enter ORCA (Option ROM Configuration for Arrays)

3. Chose Create Logical Drive

4. Pressed Space on each of 3 drives at Port 11, Box 1, Bays 1–3

5. Selected RAID 5 · defaults: 256 KiB stripe, Accelerator Enable

6. Pressed Enter to commit, F8 to save

7. Exited and rebooted

Result: 1 logical drive · 2 TB usable from 3 TB raw (one drive's worth of capacity holds parity) · parity initialization began in the background.

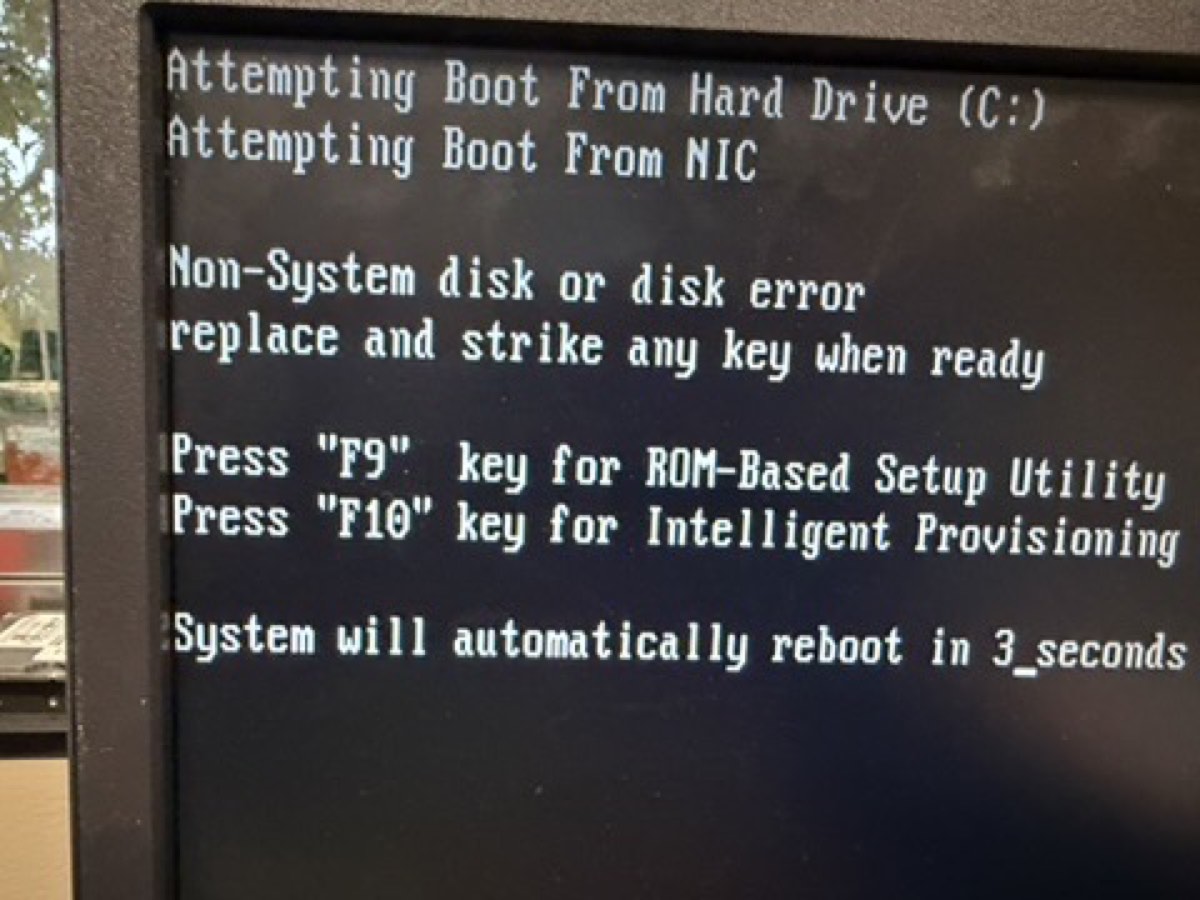

POST confirmed the array

↑ ORCA main menu after creation — "View Logical Drive" is now selectable, which means an array exists ✓

↑ Next boot: "Non-System disk" — exactly what we wanted. Server sees the array as C:, just no OS yet. The 1785 "array not configured" error is gone.

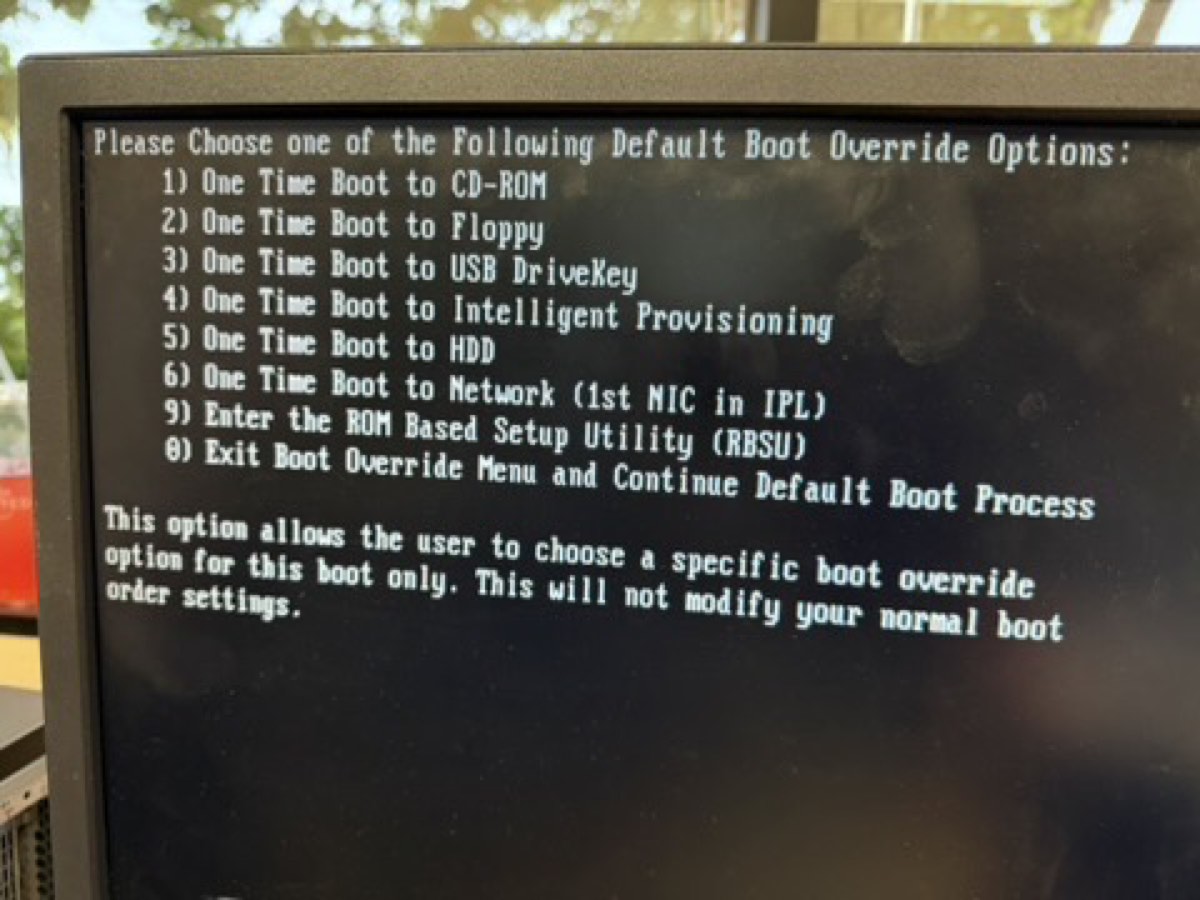

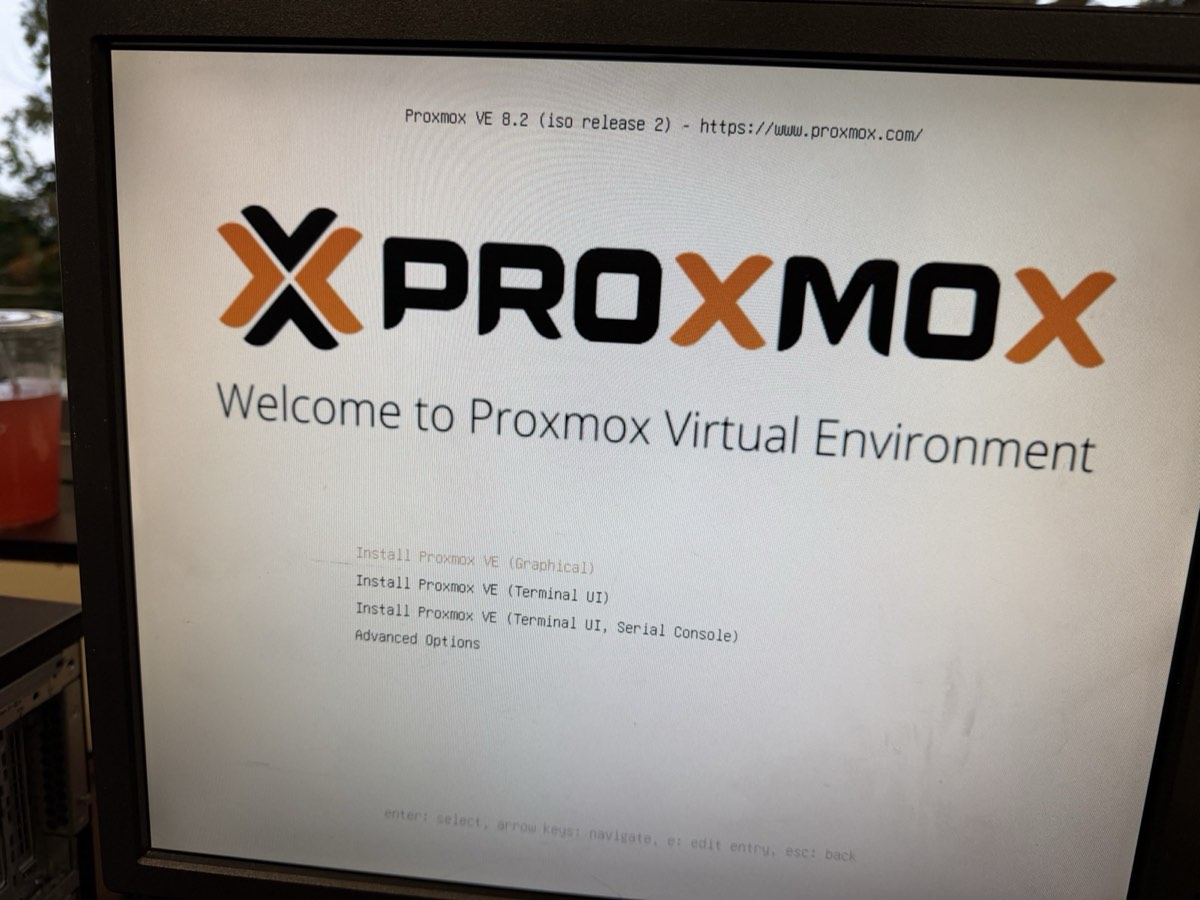

Booted Proxmox VE 8.2.2 via iLO virtual media

F11 at POST → option 1 (CD-ROM) — iLO serves the ISO as a virtual CD

Proxmox installer loaded — picked "Install Proxmox VE (Graphical)"

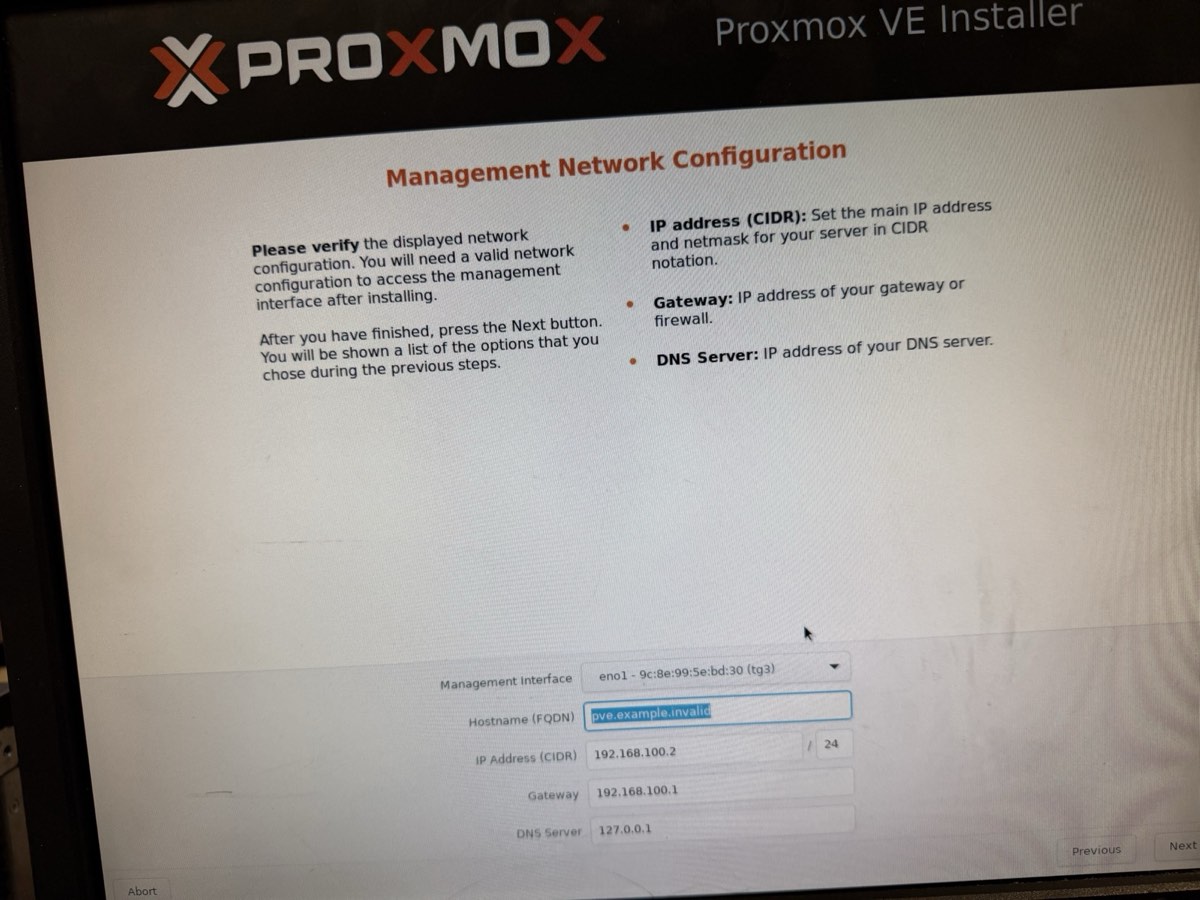

Management network configured on eno1

No USB stick needed — iLO's virtual media mounted the ISO straight from a laptop browser.

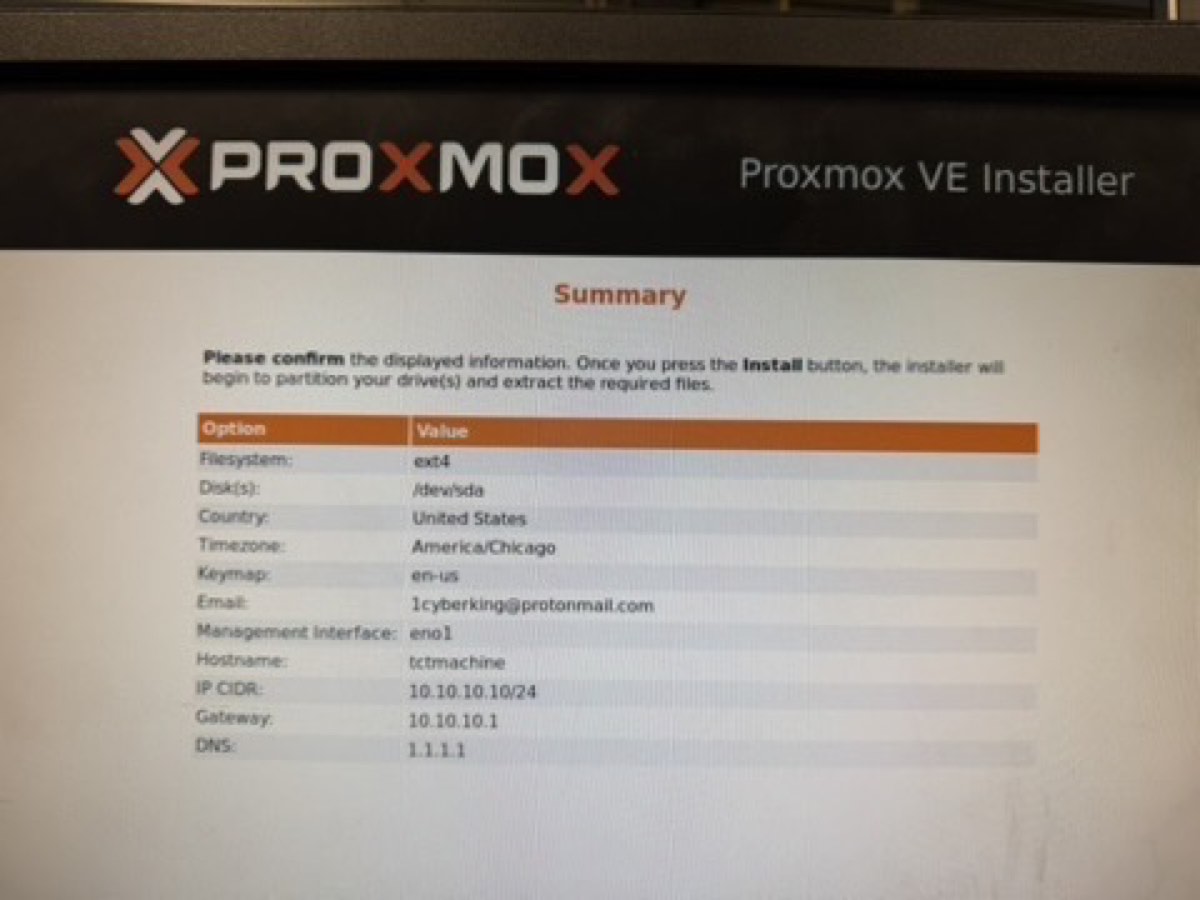

Final installer summary — then clicked Install

What we installed with

| Filesystem | ext4 on /dev/sda |

| Target disk | RAID 5 array (3 TB raw, 2 TB usable) |

| Country / TZ | United States / America/Chicago |

| Management NIC | eno1 |

| Hostname | tctmachine |

| IP (CIDR) | 10.10.10.10/16 |

| Gateway | 10.10.10.1 |

| DNS | 1.1.1.1 (Cloudflare) |

The install ran about 5 minutes. We unmounted the virtual ISO and rebooted, and Proxmox came up on the first try.

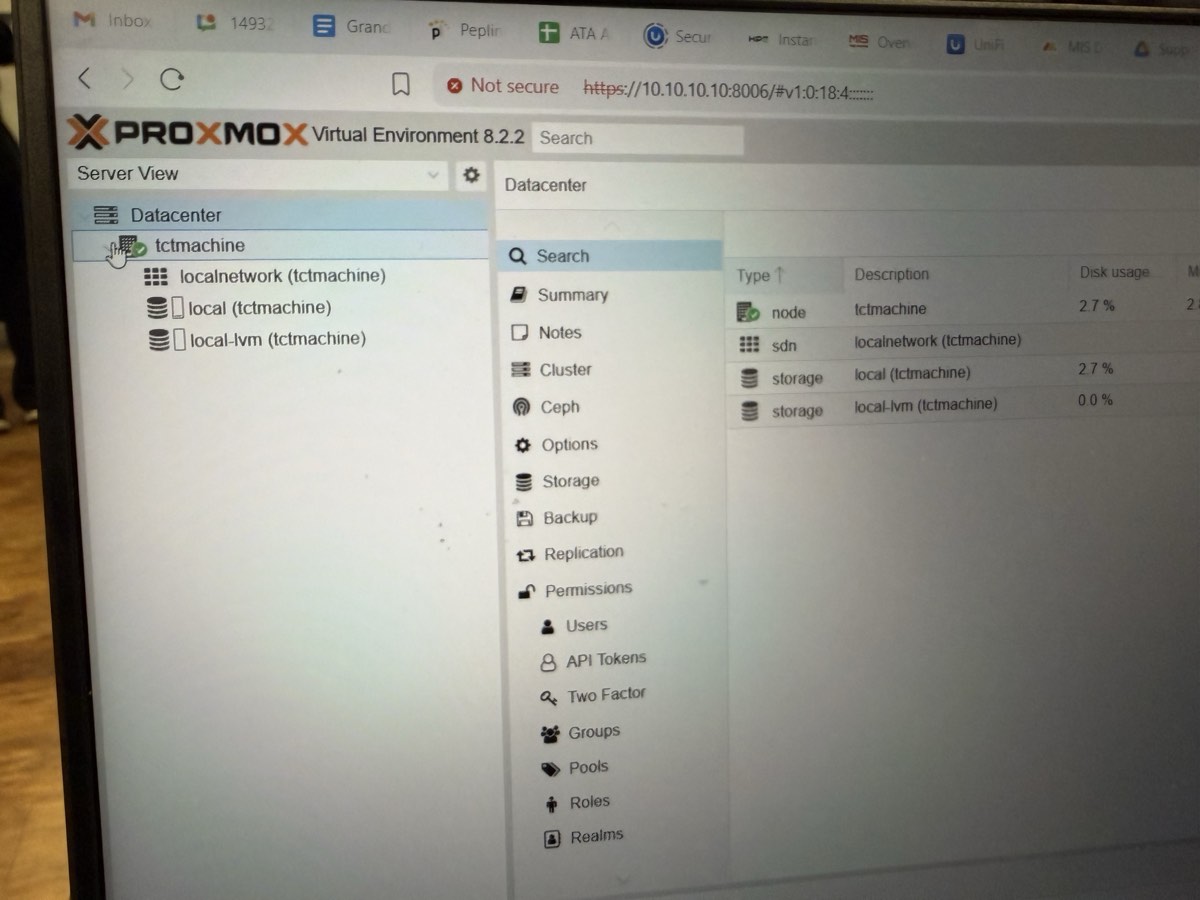

Web UI reached from a browser

↑ https://10.10.10.10:8006 from a laptop on the school LAN. Logged in as root.

What the dashboard confirmed

- ✓ Proxmox VE 8.2.2 running

- ✓ Node tctmachine online under Datacenter

- ✓ localnetwork SDN exists

- ✓ local storage mounted · 2.7% used (base system)

- ✓ local-lvm thin pool ready · 0.0% used (awaiting VMs)

- ✓ Authentication, TLS, web stack all functional

What this means

Every layer from iLO up through the Proxmox UI is working. The RAID 5 array (2 TB usable) is exposed as local (host root + ISOs) and local-lvm (VM disks). Ready to create bridges and VMs next.

Proxmox VE 8.2.2 is live

Week 1 ✓ complete

- ✓ Every component identified and documented

- ✓ iLO 4 configured for remote management

- ✓ RAID 5 array built on 3× 1 TB SATA (3 TB raw, 2 TB usable)

- ✓ Proxmox VE 8.2.2 installed on the array

- ✓ Management IP 10.10.10.10/16 on eno1

- ✓ Web UI live at https://10.10.10.10:8006

- ✓ Internet reachable (DNS 1.1.1.1)

Proof — screenshots captured

- POST banner with RAID 5 present + 1785 error cleared

- ORCA main menu showing the logical drive

- Proxmox installer summary

- Proxmox login / dashboard

Known follow-ups

- Rename host → pve01.capstone.local (hostnamectl)

- Run apt update && apt full-upgrade

- Enable no-subscription repo

- Record FBWC cache size + capacitor status

- Weekly SMART check on all 3 drives (WD RE3 is 15 yrs old)
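Drives behind a Smart Array controller are usually not visible to smartctl as plain disks. smartmontools addresses them with the `-d cciss,N` device type. Below is a sketch that prints one health query per drive instead of running it. It assumes the logical drive is /dev/sda (as in the installer summary) and that the three physical drives are indexed 0 to 2; both should be confirmed on the host. The function name `smart_commands` is hypothetical.

```shell
# smart_commands DEVICE COUNT
# Prints one smartctl health query per physical drive behind the controller.
# Assumption: drive indexes start at 0 and are contiguous.
smart_commands() {
    device=$1
    count=$2
    i=0
    while [ "$i" -lt "$count" ]; do
        echo "smartctl -H -d cciss,$i $device"
        i=$(( i + 1 ))
    done
}

smart_commands /dev/sda 3
```

Reviewing the printed lines first and then running them as root keeps the check transparent. A weekly cron entry is one way to schedule it.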

What we're deploying and where

🎯 Objective

Stand up the core services every downstream week depends on — Windows DNS/DHCP/IIS + Linux NGINX/MariaDB — on a NAT-bridged network.

The 4 phases

- Phase 0 · 🌐 verify NAT bridge + Jump Box + internet

- Phase 1 · 🪟 Windows DNS · DHCP · IIS welcome page

- Phase 2 · 🐧 Linux NGINX · MariaDB capstone_db

- Phase 3 · 🌐 cross-VM ping, nslookup, DHCP lease tests

Week 2 IP plan · 3 bridges live

| Bridge | Subnet | Host IP |
|---|---|---|
| vmbr0 mgmt | 10.10.0.0/16 | 10.10.10.10 |
| vmbr1 DMZ | 172.16.0.0/24 | 172.16.0.10 |
| vmbr2 LAN | 192.168.0.0/24 | 192.168.0.10 |

VM static IPs

| Windows Server | 192.168.0.2 (vmbr2) |

| Linux Server | 192.168.0.3 (vmbr2) |

| DMZ Web / Jump | 172.16.0.2–.20 (vmbr1), excluding .10 (host) |

| DHCP scope | 192.168.0.50 – .100 |

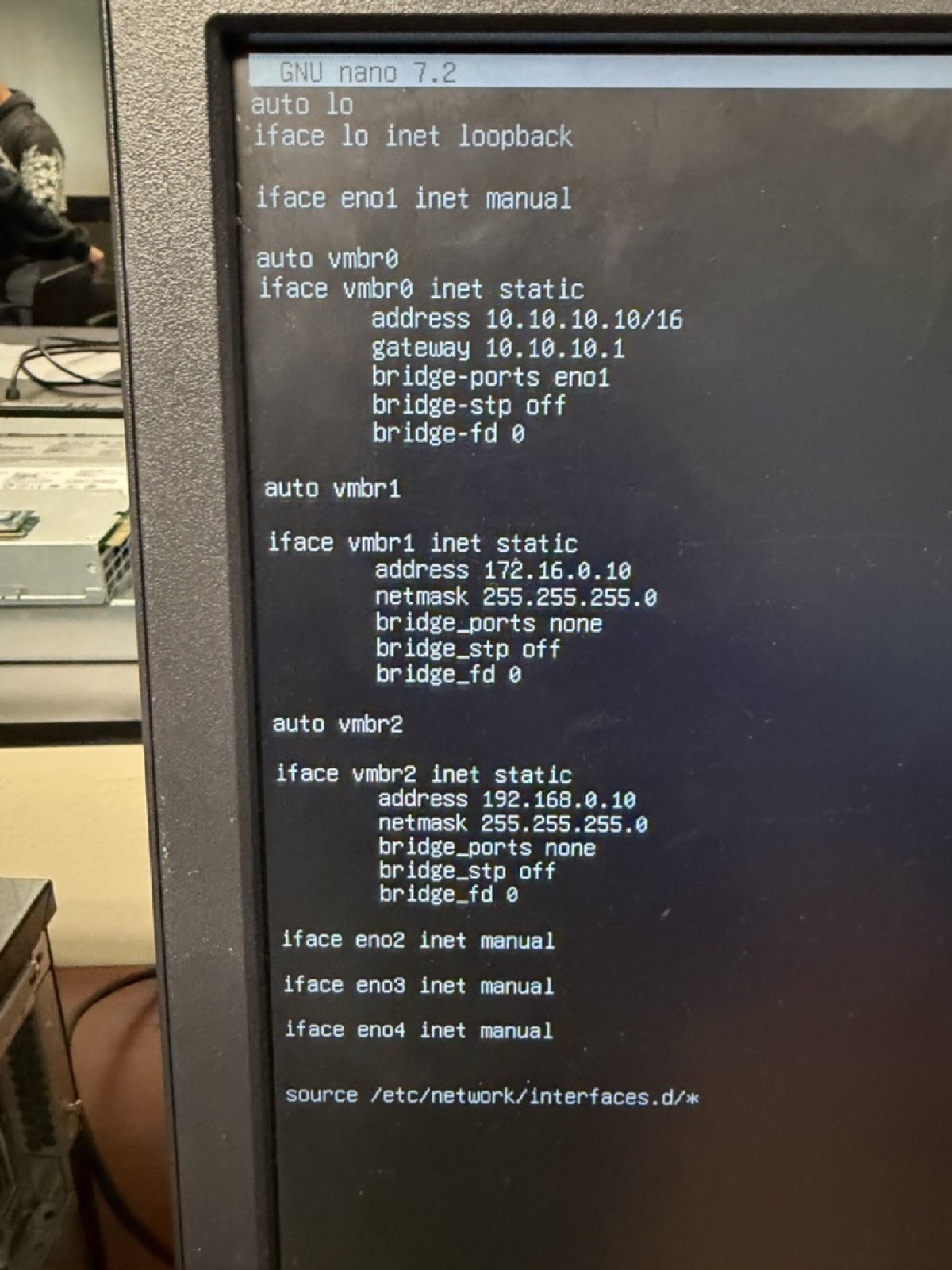

Three bridges on the Proxmox host

✓ Typo fixed — iface vmbr2 inet static. Proxmox GUI shows vmbr0/1/2 all Active=Yes, Autostart=Yes.

Correct config

auto vmbr0

iface vmbr0 inet static

address 10.10.10.10/16

gateway 10.10.10.1

bridge-ports eno1

bridge-stp off

bridge-fd 0

auto vmbr1

iface vmbr1 inet static

address 172.16.0.10

netmask 255.255.255.0

bridge-ports none

bridge-stp off

bridge-fd 0

auto vmbr2

iface vmbr2 inet static

address 192.168.0.10

netmask 255.255.255.0

bridge-ports none

bridge-stp off

bridge-fd 0

After saving: ifreload -a — verify with ip addr show vmbr1 and ip addr show vmbr2.

Verify bridges & internet

Ping tests (from Proxmox host)

# self-tests: are the bridges up?

ping -c 2 172.16.0.10 # vmbr1 self

ping -c 2 192.168.0.10 # vmbr2 self

# once VMs exist:

ping -c 4 192.168.0.2 # Win VM (vmbr2)

ping -c 4 192.168.0.3 # Linux VM (vmbr2)

ping -c 4 172.16.0.2 # DMZ Jump (vmbr1)

| From → To | Record |

|---|---|

| Host → Win VM | ___ ms |

| Host → Linux VM | ___ ms |

| Host → DMZ | ___ ms |

Outbound NAT (to internet)

Windows VM cmd:

ping 8.8.8.8

expect < 30 ms

Linux VM bash:

curl https://ifconfig.me

→ returns school public IP

If fails: confirm IP forwarding + iptables MASQUERADE on vmbr0 egress, VMs have correct gateway (192.168.0.10 for LAN, 172.16.0.10 for DMZ).
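The forwarding and MASQUERADE settings named above can be written out explicitly. Below is a sketch that only prints the commands, so they can be reviewed before being run as root on the Proxmox host. The function name `nat_commands` is hypothetical. The subnets and egress bridge are the ones used in this deck.

```shell
# nat_commands EGRESS_IFACE SUBNET...
# Prints the sysctl and iptables commands that enable outbound NAT
# for each internal subnet leaving through EGRESS_IFACE.
nat_commands() {
    egress=$1
    shift
    echo "sysctl -w net.ipv4.ip_forward=1"
    for subnet in "$@"; do
        echo "iptables -t nat -A POSTROUTING -s $subnet -o $egress -j MASQUERADE"
    done
}

nat_commands vmbr0 172.16.0.0/24 192.168.0.0/24
```

To inspect what is already active on the host, `sysctl net.ipv4.ip_forward` and `iptables -t nat -S POSTROUTING` show the current state.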

Windows Server roles: DNS + DHCP

Install DNS + create zone

- Server Manager → Add Roles → DNS Server

- Tools → DNS → right-click Forward Lookup Zones → New Zone

- Primary zone · name teamx.local

- Right-click zone → New Host (A): name winserver · IP 192.168.0.2 · ✓ create PTR

nslookup winserver.teamx.local

Address: 192.168.0.2

Install DHCP + create scope

- Server Manager → Add Roles → DHCP Server → complete wizard

- Tools → DHCP → IPv4 → right-click → New Scope

| Name | CapstoneScope |

| Range | 192.168.0.50 – .100 |

| Mask | 255.255.255.0 |

| Gateway | 192.168.0.10 |

| DNS | 192.168.0.2 |

| Suffix | teamx.local |

Right-click scope → Activate. Test from a client: ipconfig /release && ipconfig /renew.
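Static addresses should sit outside the DHCP range, otherwise the server can lease an address that is already in use. Below is a small sketch that checks the static IPs from the Week 2 IP plan against its 192.168.0.50 to .100 scope. It assumes everything is in the same /24, so only the last octet is compared. The function name `in_scope` is hypothetical.

```shell
# in_scope ADDRESS FIRST LAST
# Succeeds when the last octet of ADDRESS lies within FIRST..LAST.
# Assumption: ADDRESS and the scope share the same /24.
in_scope() {
    last_octet=${1##*.}
    [ "$last_octet" -ge "$2" ] && [ "$last_octet" -le "$3" ]
}

# Windows Server, Linux Server, and the host's vmbr2 address
for ip in 192.168.0.2 192.168.0.3 192.168.0.10; do
    if in_scope "$ip" 50 100; then
        echo "$ip is inside the DHCP scope"
    else
        echo "$ip is outside the DHCP scope"
    fi
done
```

All three addresses report as outside the scope, which is the intended result.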

IIS — publish the welcome page

Install + deploy

- Server Manager → Add Roles → Web Server (IIS)

- File Explorer → C:\inetpub\wwwroot\

- Delete iisstart.htm + iisstart.png

- Right-click → New → Text Document → paste HTML

- Save As… → type All Files → index.html

<html>

<body>

<h1>Welcome to Week 2!</h1>

</body>

</html>

Test from a client

# by IP

http://192.168.0.2

# by DNS hostname

http://winserver.teamx.local

Both should render Welcome to Week 2! as an H1.

📸 Screenshot the browser showing the welcome page — URL bar must be visible.

Troubleshoot: Windows Firewall → allow HTTP (port 80) if the page can't be reached remotely.

NGINX — install & publish

Install & enable

sudo apt update

sudo apt install nginx -y

sudo systemctl enable nginx

sudo systemctl start nginx

sudo systemctl status nginx

● nginx.service - active (running)

Deploy the page

echo "<h1>Welcome to Linux Week 2</h1>" | sudo tee /var/www/html/index.html

cat /var/www/html/index.html

Test from another VM

# from Windows or Jump Box browser

http://192.168.0.3

📸 Screenshot the browser showing the rendered heading.

If blocked: sudo ufw allow 80/tcp · verify IP is .3 · gateway .10.

MariaDB — create the capstone database

Install + launch

sudo apt install mariadb-server -y

sudo systemctl enable mariadb

sudo systemctl start mariadb

# optional hardening

sudo mysql_secure_installation

sudo mysql

Create DB + user + grant

CREATE DATABASE capstone_db;

CREATE USER 'capuser'@'localhost' IDENTIFIED BY 'securepass';

GRANT ALL PRIVILEGES ON capstone_db.* TO 'capuser'@'localhost';

FLUSH PRIVILEGES;

EXIT;

Verify

mysql -u capuser -p -e "SHOW DATABASES;"

| capstone_db |

📸 Screenshot the SHOW DATABASES; output with capstone_db visible.

Verify everything end-to-end · write the report

Ping both directions

# Win cmd

ping 192.168.0.3

# Linux bash

ping -c 4 192.168.0.2

DNS lookup

nslookup winserver.teamx.local

→ 192.168.0.2

DHCP lease

New client VM → DHCP → record the leased IP from Win DHCP Manager.
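The Pass/Fail column of the report's test summary can be filled in from a small wrapper around each check. Below is a sketch. The function name `run_check` is hypothetical, and the example calls are left as comments because they only make sense on the lab network.

```shell
# run_check LABEL COMMAND [ARGS...]
# Runs the command quietly and prints PASS or FAIL followed by the label.
run_check() {
    label=$1
    shift
    if "$@" >/dev/null 2>&1; then
        echo "PASS $label"
    else
        echo "FAIL $label"
    fi
}

# Example calls from the Linux VM (uncomment on the lab network):
# run_check "ping Windows VM" ping -c 4 192.168.0.2
# run_check "IIS welcome page" curl -fsS http://192.168.0.2
# run_check "NGINX welcome page" curl -fsS http://192.168.0.3

run_check "wrapper self-test" true
```

The wrapper relies only on each command's exit status, so any tool that returns non-zero on failure can be plugged in.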

📸 5 mandatory screenshots

- DNS zone + A record

- DHCP scope + active lease

- IIS page in browser

- NGINX page in browser

- DB CLI:

SHOW DATABASES;

📄 Week 2 Report

- Cover: week, team, roles

- Phase 0 tables filled

- Test summary table w/ Pass/Fail

- 5 screenshots (above)

- Reflection ×3 (trickiest test, longest service, unresolved issues)

- Asset tracker export (VMs · IPs · roles)

Full walkthrough: week2.html

Jump Box, DNAT port-forward, reverse routes

🛡 Jump Box VM — on vmbr1

- Ubuntu Server · 2 vCPU · 2 GB · 25 GB

- Static 172.16.0.x, gw 172.16.0.10

- Hardened SSH: non-root user, PermitRootLogin no

- ufw allow 22/tcp from 10.10.10.0/24, 172.16.0.0/24, 192.168.0.0/24

- DNS for all internal VMs = Windows Server IP

🔁 Reverse route — on internal VMs

# Windows (admin PowerShell)

route add 172.16.0.0 mask 255.255.255.0 192.168.0.10

# Linux

sudo ip route add 172.16.0.0/24 via 192.168.0.10

🔀 Host iptables — DNAT + persistence

# SSH port-forward: WAN :2222 → Jump Box :22

iptables -t nat -A PREROUTING -i vmbr0 -p tcp --dport 2222 -j DNAT --to-destination 172.16.0.2:22

iptables -A FORWARD -p tcp -d 172.16.0.2 --dport 22 -j ACCEPT

iptables -A INPUT -p tcp --dport 2222 -j ACCEPT

# Persist across reboots

apt install iptables-persistent

netfilter-persistent save

# or: iptables-save > /etc/iptables/rules.v4

MASQUERADE for 172.16.0.0/24 and 192.168.0.0/24 out vmbr0 already set in /etc/network/interfaces as post-up lines.

Test: from a laptop on the school LAN → ssh -p 2222 jumpadmin@10.10.10.10 → lands on the Jump Box at 172.16.0.2.

Jump Box install — values that worked

Proxmox Create VM

| VM ID | 101 |

| Name | jumpbox |

| ISO | ubuntu-24.04.1-live-server-amd64.iso |

| Disk | 25 GB on local-lvm |

| CPU | 1 socket × 2 cores, type host |

| RAM | 2048 MB |

| Bridge | vmbr1 (DMZ), VirtIO |

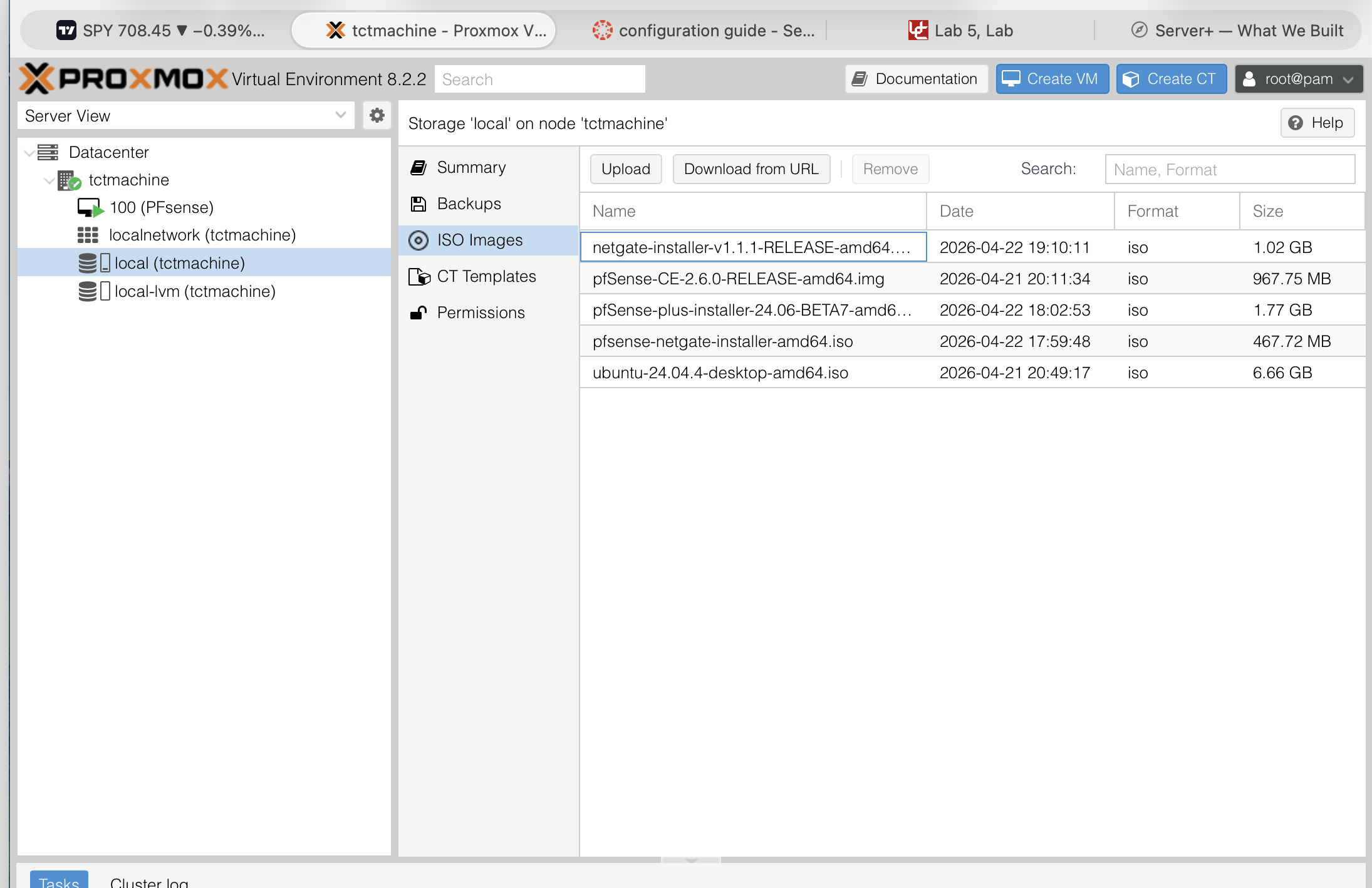

ISO Images storage view (Ubuntu Desktop was already there; Server downloaded next)

Ubuntu installer · Network

| Method | Manual |

| Subnet | 172.16.0.0/24 |

| Address | 172.16.0.2 |

| Gateway | 172.16.0.10 (Proxmox host) |

| DNS | 1.1.1.1, 8.8.8.8 |

Profile

| Server name | jumpbox |

| Username | jumpadmin |

| SSH | ✓ Install OpenSSH server |

⚠ Ubuntu installer's autoconfig fails on vmbr1 (no DHCP) — that's expected. Set static manually.

Weeks 3 & 4

Services depth

- Promote Windows → AD DC (capstone.local)

- Move DNS + DHCP under AD integration

- Install SQL Server Express (Windows) / confirm MariaDB connected

- Create file shares with NTFS permissions

- Ship a baseline GPO (password policy, screen lock)

- Configure Windows Backup

Harden & demo

- Tighten firewall rules: default-deny + explicit allow

- Run vulnerability scan, fix findings

- Test a real backup restore — not just "it ran"

- Break something, restore it, document the recovery

- Rehearse the demo walkthrough twice

- Sealed runbook with every password + recovery plan

4 VMs sized for 32 GB / 4 cores

| VM | Purpose | OS | vCPU | RAM | Disk | Bridge | IP |
|---|---|---|---|---|---|---|---|
| VM1 | AD / DNS / DHCP / IIS | Windows Server 2019 | 2 | 8 GB | 80 GB | vmbr2 | 192.168.0.10 |
| VM2 | SQL Server Express | Windows Server 2019 | 2 | 6 GB | 80 GB | vmbr2 | 192.168.0.20 |
| VM3 | Apache + MySQL web | Ubuntu 22.04 LTS | 2 | 4 GB | 40 GB | vmbr1 | 172.16.0.10 |
| VM4 | Jump box / gateway | Windows / Ubuntu | 1 | 2 GB | 30 GB | vmbr1 | 172.16.0.20 |
| Totals | | | 7 | 20 GB | 230 GB | Leaves ~12 GB RAM for the host | |

⚠ No Hyper-Threading on E5-2609 v2 — 7 vCPU on 4 real cores = ~1.75× over-subscription, acceptable for lightly-loaded services. Keep CPU-heavy workloads off the same host.
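The sizing totals and the over-subscription ratio can be recomputed from the table, and the same pass can flag address collisions. Below is a sketch, with VM values copied from the table above and host bridge addresses taken from the Week 2 IP plan. The variable and function names are hypothetical.

```shell
# Planned VM sizes from the table: vCPU and RAM (GB) per VM.
vcpus="2 2 2 1"
ram_gb="8 6 4 2"
host_cores=4
host_ram_gb=32

# sum_list N... prints the sum of its integer arguments
sum_list() {
    total=0
    for n in "$@"; do
        total=$(( total + n ))
    done
    echo "$total"
}

total_vcpu=$(sum_list $vcpus)     # unquoted on purpose: one argument per VM
total_ram=$(sum_list $ram_gb)
ratio=$(awk -v v="$total_vcpu" -v c="$host_cores" 'BEGIN { printf "%.2f", v / c }')

echo "vCPU: $total_vcpu on $host_cores cores = ${ratio}x"
echo "RAM: $total_ram GB allocated, $(( host_ram_gb - total_ram )) GB left for the host"

# Host bridge addresses (vmbr2, vmbr1) followed by the four planned VM addresses.
# Any line printed by uniq -d is an address claimed twice.
dups=$(printf '%s\n' 192.168.0.10 172.16.0.10 \
    192.168.0.10 192.168.0.20 172.16.0.10 172.16.0.20 | sort | uniq -d)
echo "Duplicate addresses:"
echo "$dups"
```

With the values as listed, the duplicate check reports 172.16.0.10 and 192.168.0.10, the two planned VM addresses that match the host's bridge addresses. Those need to be reconciled before the VMs are built.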

What the instructor gets

Documentation

- Week 1 report form — all fields filled with real values

- Server Hardware Discovery Sheet — 12 sections, completed

- IT Asset Tracking spreadsheet — Vendors · Hardware · Software tabs

- Network diagram (Cisco Packet Tracer)

- This slide deck — presentation for demo day

Live systems

- Proxmox VE host reachable at https://10.10.10.10:8006

- iLO 4 remote management on dedicated RJ-45

- RAID 5 healthy with active parity protection

- Coming: running AD / DNS / SQL / web VMs

- Coming: firewall-enforced zone separation

Observations worth calling out

Hardware

- HP POST uses different keys than Dell — F8/F9/F10/F11, not F2/F12

- E5-2609 v2 has no Hyper-Threading and no Turbo — 4 cores is the ceiling

- 1 CPU means half the DIMM slots and PCIe risers are inactive

- 4× DIMMs across 4 channels = optimal quad-channel balance for 1 CPU ✓

Process

- iLO 4 virtual media removed the need for USB installers — huge time saver

- 1785-Drive Array Not Configured is expected before RAID is built; it is not an error

- RAID 5 needs ≥3 drives; the controller greys it out below the minimum

- Proxmox accepts single-label hostnames, but an FQDN such as pve01.capstone.local is the convention

- Drive mismatch (consumer WD Blue + 2010 WD RE3) is a reliability risk; plan to replace before production

Cheat sheet — the things we'll forget

Boot keys (HP ML350p Gen8)

| F9 | System Utilities / RBSU (BIOS) |

| F10 | Intelligent Provisioning (HP installer) |

| F11 | One-time boot menu |

| F8 | Smart Array ORCA (RAID config) |

Key URLs

| iLO 4 | https://<iLO-IP> |

| Proxmox UI | https://10.10.10.10:8006 |

IP plan

| Zone | Subnet | Gateway |
|---|---|---|
| Mgmt (vmbr0) | 10.10.0.0/16 | 10.10.10.1 |
| DMZ (vmbr1) | 172.16.0.0/24 | 172.16.0.10 (host) |
| LAN (vmbr2) | 192.168.0.0/24 | 192.168.0.10 (host) |

POST error codes

- 1785 · drive array not configured → build the array

- 1779 · capacitor charging → wait ~5 min

- 1794 · battery < 75% capacity → replace soon

- 1797 · battery failure → replace now

Questions?

Live server: https://10.10.10.10:8006

HP ProLiant ML350p Gen8 · 1× Xeon E5-2609 v2 · 32 GB DDR3 ECC · RAID 5 on 3× 1 TB SATA (3 TB raw, 2 TB usable)

Proxmox VE 8.2.2 · 3-bridge iptables topology · Cybertex Austin